OpenAI’s New Tool Will Give Artists Control Over Their Data—but It’s Unclear How

Announcing the first academic Mechanistic Interpretability workshop, held at ICML 2024! I think this is an exciting development that's a lagging indicator of mech interp gaining legitimacy as an academic field, and a good chance for field building and sharing recent progress!

We'd love to get papers submitted if any of you have relevant projects! Deadline May 29, max 4 or max 8 pages. We welcome anything that brings us closer to a principled understanding of model internals, even if it's not "traditional" mech interp. Check out our website for example topics! There's $1750 in best paper prizes. We also welcome less standard submissions, like open source software, models or datasets, negative results, distillations, or position pieces.

And if anyone is attending ICML, you'd be very welcome at the workshop! We have a great speaker line-up: Chris Olah, Jacob Steinhardt, David Bau and Asma Ghandeharioun. And a panel discussion, hands-on tutorial, and social. I’m excited to meet more people into mech interp! And if you know anyone who might be interested in attending/submitting, please pass this on.

Thanks to my great co-organisers: Fazl Barez, Lawrence Chan, Kayo Yin, Mor Geva, Atticus Geiger and Max Tegmark.

In Clarifying the Agent-Like Structure Problem (2022), John Wentworth describes a hypothetical instance of what he calls a selection theorem. In Scott Garrabrant's words, the question is, does agent-like behavior imply agent-like architecture? That is, if we take some class of behaving things and apply a filter for agent-like behavior, do we end up selecting things with agent-like architecture (or structure)? Of course, this question is heavily under-specified. So another way to ask this is, under which conditions does agent-like behavior imply agent-like structure? And, do those conditions feel like they formally encapsulate a naturally occurring condition?

For the Q1 2024 cohort of AI Safety Camp, I was a Research Lead for a team of six people, where we worked a few hours a week to better understand and make progress on this idea. The teammates[1] were Einar Urdshals, Tyler Tracy, Jasmina Nasufi, Mateusz Bagiński, Amaury Lorin, and Alfred Harwood.

The AISC project duration was too short to find and prove a theorem version of the problem. Instead, we investigated questions like:

Other posts on our progress may come out later. For this post, I'd like to simply help concretize the problem that we wish to make progress on.

When we say that something exhibits agent behavior, we mean that it seems to make the trajectory of the system go a certain way. We mean that, instead of the "default" way that a system might evolve over time, the presence of this agent-like thing makes it go some other way. The more specific the target it seems to hit, the more agentic we say its behavior is. On LessWrong, the word "optimization" is often used for this type of system behavior. So that's the behavior that we're gesturing toward.

Seeing this behavior, one might say that the thing seems to want something, and tries to get it. It seems to somehow choose actions which steer the future toward the thing that it wants. If it does this across a wide range of environments, then it seems like it must be paying attention to what happens around it, using that information to infer how the world around it works, and using that model of the world to figure out which actions would be more likely to lead to the outcomes it wants. This is a vague description of a type of structure. That is, it's a description of a type of process happening inside the agent-like thing.

So, exactly when does the observation that something robustly optimizes imply that it has this kind of process going on inside it?

Our slightly more specific working hypothesis is that agent-like structure consists of three parts: a world-model, a planning module, and a representation of the agent's values. The world-model is very roughly like Bayesian inference; it starts out ignorant about what world it's in, and updates as observations come in. The planning module somehow identifies candidate actions, and then uses the world-model to predict their outcomes. And the representation of its values is used to select which outcome is preferred. The agent then takes the corresponding action.
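As a deliberately tiny illustration of the three-part hypothesis, here is a Python sketch. It assumes a finite set of deterministic hypotheses about the world, each mapping an action to the outcome it would produce. The world-model is an exact Bayesian update over those hypotheses, the planning module predicts each candidate action's outcome under every hypothesis, and a utility function plays the role of the value representation. All names (`update`, `plan`, the `food`/`nothing` outcomes) are hypothetical. This is one possible instance, not a claim about what agent structure must look like.

```python
from typing import Callable, Dict, List

# Hypothetical world hypotheses: each maps an action to the outcome it would produce.
Hypothesis = Dict[int, str]


def update(belief: Dict[str, float], hyps: Dict[str, Hypothesis],
           action: int, outcome: str) -> Dict[str, float]:
    """World-model step: Bayesian update with a deterministic likelihood
    (1 if a hypothesis predicted the observed outcome, 0 otherwise)."""
    unnorm = {name: p * (1.0 if hyps[name][action] == outcome else 0.0)
              for name, p in belief.items()}
    z = sum(unnorm.values())
    if z == 0:
        raise ValueError("every hypothesis was ruled out")
    return {name: p / z for name, p in unnorm.items()}


def plan(belief: Dict[str, float], hyps: Dict[str, Hypothesis],
         actions: List[int], utility: Callable[[str], float]) -> int:
    """Planning + values: predict each action's outcome under every hypothesis,
    score it with the utility function, and pick the best expected score."""
    def expected_utility(action: int) -> float:
        return sum(p * utility(hyps[name][action]) for name, p in belief.items())
    return max(actions, key=expected_utility)


def utility(outcome: str) -> float:
    return 1.0 if outcome == "food" else 0.0


hyps = {"A": {0: "food", 1: "nothing"}, "B": {0: "nothing", 1: "food"}}
belief = {"A": 0.5, "B": 0.5}                     # starts out ignorant
first = plan(belief, hyps, [0, 1], utility)      # a tie, so max() keeps action 0
belief = update(belief, hyps, first, "nothing")  # the observation rules out A
print(first, belief, plan(belief, hyps, [0, 1], utility))  # 0 {'A': 0.0, 'B': 1.0} 1
```

The point of the split is that each part can be swapped independently. For example, the exact update could be replaced by an approximate one without touching the planner.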

This may sound to you like an algorithm for utility maximization. But a big part of the idea behind the agent structure problem is that there is a much larger class of structures, which are like utility maximization, but which are not constrained to be the idealized version of it, and which will still display robustly good performance.

So in the context of the agent structure problem, we will use the word "agent" to mean this fairly specific structure (while remaining very open to changing our minds). (If I do use the word agent otherwise, it will be when referring to ideas in other fields.) When we want to talk about something which may or may not be an agent, we'll use the word "policy". Be careful not to import any connotations from the field of reinforcement learning here. We're using "policy" because it has essentially the same type signature. But there's not necessarily any reward structure, time discounting, observability assumptions, et cetera.

A disproof of the idea, that is, a proof that you can have a policy that is not-at-all agent-structured, and yet still has robustly high performance, is, I think, equally exciting. I expect that resolving the agent structure problem in either direction would help me get less confused about agents, which would help us mitigate existential risks from AI.

Much AI safety work is dedicated to understanding what goes on inside the black box of machine learning models. Some work, like mechanistic interpretability, goes from the bottom-up. The agent structure problem is attempting to go top-down.

Many of the arguments trying to illuminate the dangerousness of ML models will start out with a description of a particular kind of model, for example mesa-optimizers, deceptively aligned models, or scheming AIs. They will then use informal "counting arguments" to argue that these kinds of models are more or less likely, and also try to analyze whether different selection criteria change these probabilities. I think that this type of argument is a good method for trying to get at the core of the problem. But at some point, someone needs to convert them to formal counting arguments. The agent structure problem hopes to do so. If investigation into it yields fruitful results and analytical tools, then perhaps we can start to build a toolkit that can be extended to produce increasingly realistic and useful versions of the results.

On top of that, finding some kind of theorems about agent-like structures will start to show us what the right paradigm is for agent foundations. When Shannon derived his definition of information from four intuitive axioms, people found it to be a highly convincing reason to adopt that definition. If a sufficiently intuitive criterion of robust optimization implies a very particular agent structure, then we will likely find that to be a similarly compelling reason to consider that definition of agent structure going forward.

I've been using the words "problem" or "idea" because we don't have a theorem, nor do we even have a conjecture; a conjecture requires a mathematical statement with a truth-value that we could try to find a proof for. Instead, we're still at the stage of trying to pin down exactly what objects we think the idea is true about. So to start off, I'll describe a loose formalism.

I'll try to use enough mathematical notation to make the statements type-check, but with big holes in the actual definitions. I'll err towards using abbreviated words as names so it's easier to remember what all the parts are. If you're not interested in these parts or get lost in it, don't worry; their purpose is to add conceptual crispness for those who find it useful. After the loose formalism, I'll go through each part again, and discuss some alternative and additional ways to formalize each part.

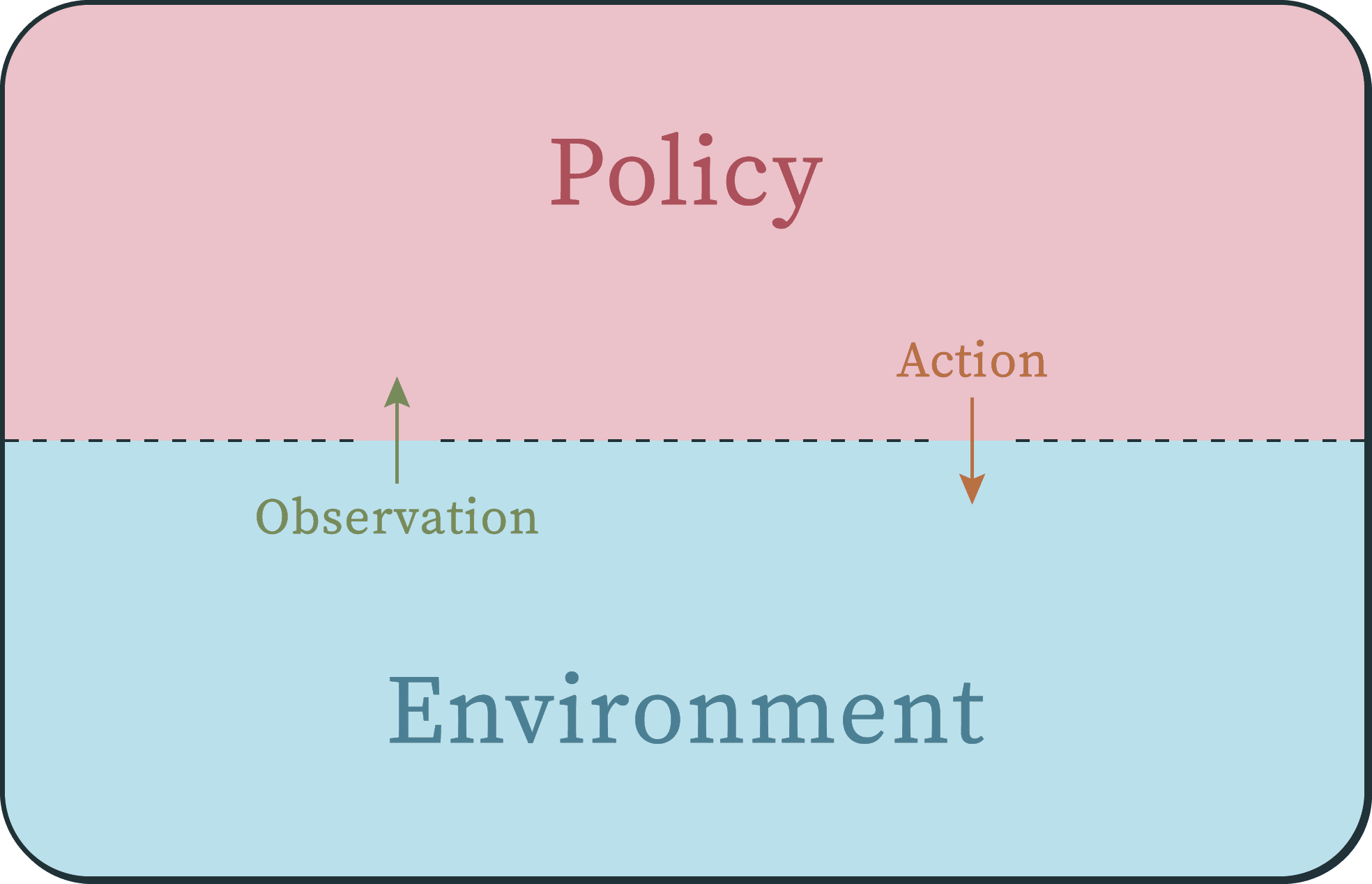

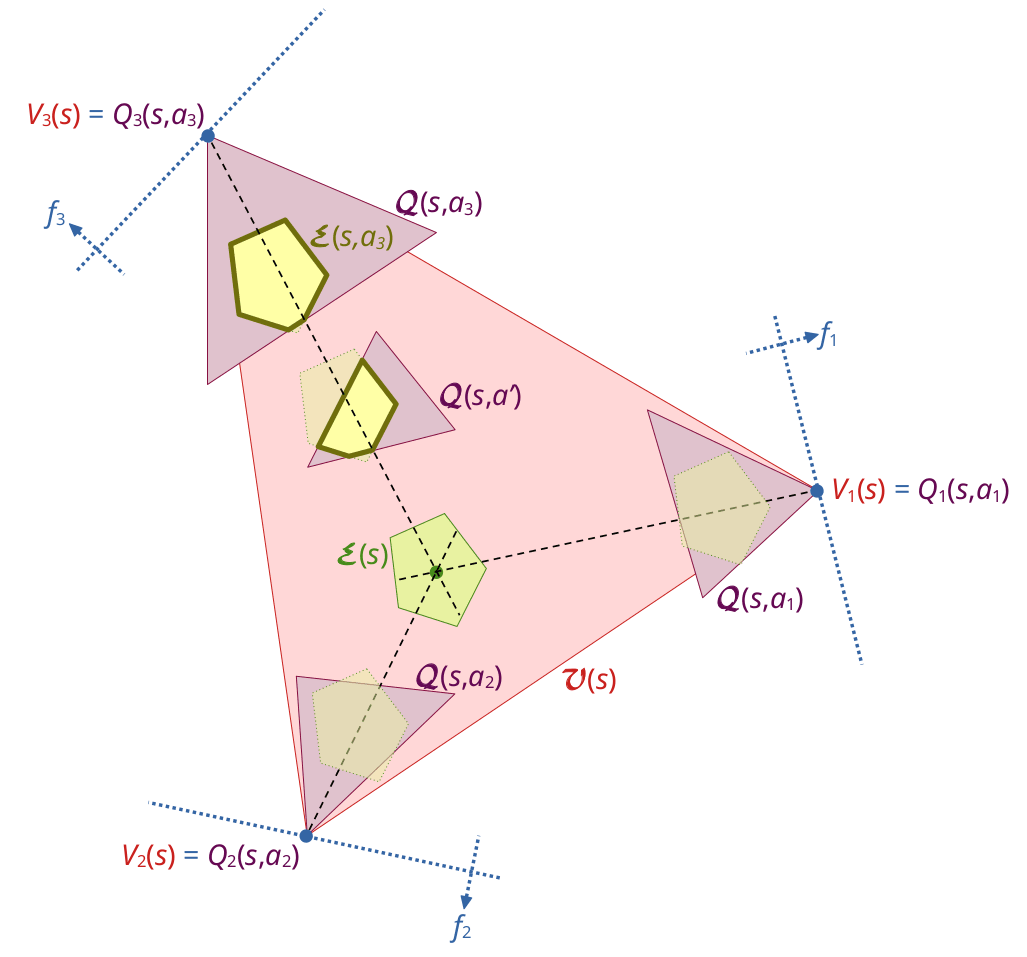

The setting is a very common one when modelling an agent/environment interaction.

Identical or analogous settings to this are used in the textbooks AI: A Modern Approach,[2] Reinforcement Learning: An Introduction,[3] Hutter's definition of AIXI,[4] and some control theory problems (often as "controller" vs "plant").

It is essentially a dynamical system where the state space is split into two parts; one which we'll call a policy, and the other the environment. Each of these halves is almost a dynamical system, except they receive an "input" from the other half on each timestep. The policy receives an "observation" from the environment, updates its internal policy state, and then outputs an action. The environment then receives the action as its input, updates its environment state, and then outputs the next observation, et cetera. (It's interesting to note that the setting itself is symmetrical between the policy side and the environment side.)

Now let's formalize this. (Reminder that it's fine to skip this section!) Let's say that we mark the timesteps of the system using the natural numbers $\mathbb{N}$. Each half of the system has an (internal) state which updates on each timestep. Let's call the set of environment states $E$, and the set of policy states $M$ (mnemonic: it's the Memory of the policy). For simplicity, let's say that the set of possible actions and observations come from the same alphabet, which we'll set to $\{0, 1\}$.

Now I'm going to define three different update functions for each half. One of them is just the composition of the other two, and we'll mostly talk about this composition. The reason for defining all three is because it's important that the environment have an underlying state over which to define our performance measure, but it's also important that the policy only be able to see the observation (and not the whole environment state).

In dynamical systems, one conventional notation given to the "evolution rule" or "update function" is $\phi$ (where a true dynamical system on a state space $X$ has the type $\phi : X \to X$). So we'll give the function that updates the environment state the name $\phi_E : E \times \{0,1\} \to E$, and the function that updates the policy state the name $\phi_M : M \times \{0,1\} \to M$. Then the function that maps the environment state to the observations is $o : E \to \{0,1\}$, and similarly, the function that maps the policy state to the action is $a : M \to \{0,1\}$.

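I've given these functions more forgettable names because the names we want to remember are for the functions that go from state and action to observation, and from state and observation to action:

$$e = o \circ \phi_E : E \times \{0,1\} \to \{0,1\}$$

and

$$\pi = a \circ \phi_M : M \times \{0,1\} \to \{0,1\}$$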

each of which is just the composition of two of the previously defined functions. To make a full cycle, we have to compose them further. If we start out with environment state $s_0 \in E$ and policy state $m_0 \in M$, then to get the next environment state, we have to extract the observation from $s_0$, feed that observation to the policy, update the policy state, extract the action from the policy, and then feed that to the environment again. That looks like this:

$$m_{t+1} = \phi_M\big(m_t,\ o(s_t)\big), \qquad s_{t+1} = \phi_E\big(s_t,\ a(m_{t+1})\big),$$

so that $s_0 \mapsto s_1 \mapsto s_2 \mapsto \cdots$, ad infinitum.
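The update cycle can be sketched in Python as follows. This is a toy instance under stated assumptions. We label the four component functions `phi_E`, `o`, `phi_M` and `a` ourselves, and environment and policy states are plain integers. The specific environment (a position on a line with a made-up target at 5) and policy (remember the last observation and echo it as the action) are invented for illustration.

```python
from typing import Callable, List


def run_coupled(
    phi_E: Callable[[int, int], int],  # environment update: (state, action) -> next state
    o: Callable[[int], int],           # observation emitted by an environment state
    phi_M: Callable[[int, int], int],  # policy update: (memory, observation) -> next memory
    a: Callable[[int], int],           # action emitted by a policy state
    s0: int,
    m0: int,
    T: int,
) -> List[int]:
    """Return the environment trajectory s_0, ..., s_T of the coupled system."""
    s, m = s0, m0
    trajectory = [s]
    for _ in range(T):
        m = phi_M(m, o(s))  # policy reads the observation and updates its memory
        s = phi_E(s, a(m))  # environment reads the resulting action and updates
        trajectory.append(s)
    return trajectory


# Toy instance (made up): action 1 steps right, action 0 steps left, and the
# observation says whether the position is still below a target of 5.
traj = run_coupled(
    phi_E=lambda s, act: s + 1 if act == 1 else s - 1,
    o=lambda s: 1 if s < 5 else 0,
    phi_M=lambda m, obs: obs,
    a=lambda m: m,
    s0=0,
    m0=0,
    T=8,
)
print(traj)  # [0, 1, 2, 3, 4, 5, 4, 5, 4]
```

Note that the policy only ever sees one bit per step, never the environment state itself, which is the separation the setup is designed to enforce.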

Notice that this type of setting is not an embedded agency setting. Partly that is a simplification so that we have any chance of making progress; using frameworks of embedded agency seems substantially harder. But also, we're not trying to formulate a theory of ideal agency; we're not trying to understand how to build the perfect agent. We're just trying to get a better grasp on what this "agent" thing is at all. Perhaps further work can extend to embedded agency.

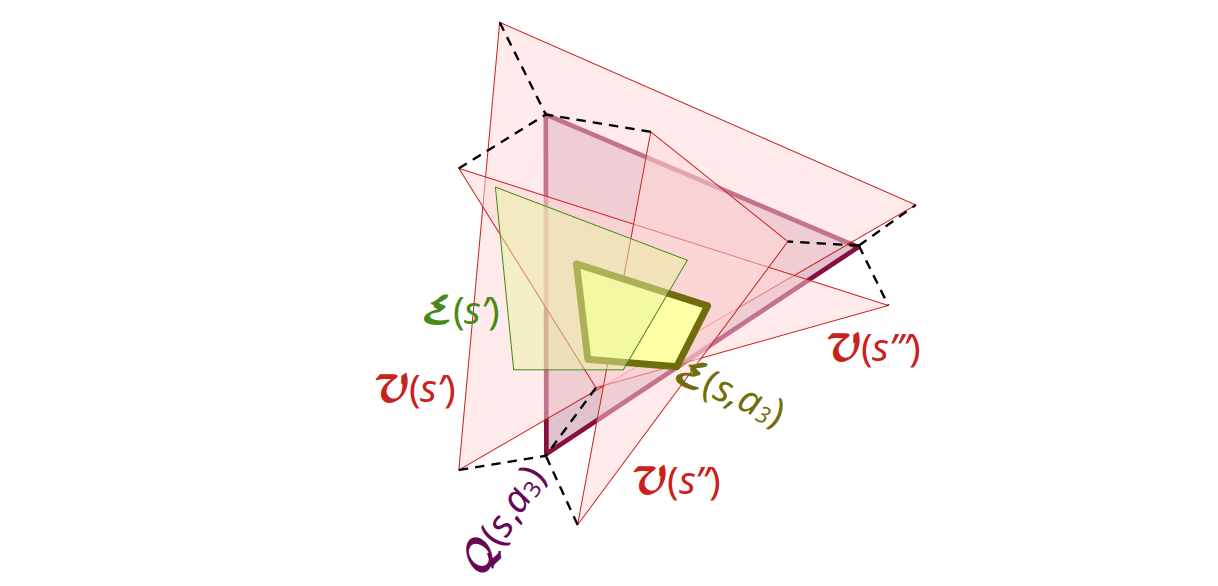

The class of environments represents the "possible worlds" that the policy could be interacting with. This could be all the different types of training data for an ML model, or it could be different laws of physics. We want the environment class to be diverse, because if it were very simple, there would likely be a clearly non-agentic policy that does well. For example, if the environment class was mazes (without loops), and the performance measure was whether the policy ever got to the end of the maze, then the algorithm "stay against the left wall" would always solve the maze, while clearly not having any particularly interesting properties around agency.
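To make the maze example concrete, here is a Python sketch of the left-wall rule on a grid maze. It assumes the maze is a list of strings with `#` for walls, a solid outer wall, and no loops; the specific maze is made up. The rule only ever inspects the four neighbouring cells and stops when it happens to stand on the goal. It keeps no map and no model, and nothing in it resembles a world-model, planner, or value representation.

```python
from typing import List, Optional, Tuple

DIRS = [(-1, 0), (0, 1), (1, 0), (0, -1)]  # N, E, S, W as (row, col) steps


def left_wall_follow(maze: List[str], start: Tuple[int, int], goal: Tuple[int, int],
                     heading: int = 1, max_steps: int = 10_000) -> Optional[int]:
    """Keep the left hand on the wall: prefer turning left, then straight,
    then right, then turning back. Returns the step at which the goal is
    reached, or None if it is not reached within max_steps."""
    r, c = start
    for step in range(max_steps):
        if (r, c) == goal:
            return step
        for turn in (3, 0, 1, 2):  # left, straight, right, back (relative to heading)
            d = (heading + turn) % 4
            nr, nc = r + DIRS[d][0], c + DIRS[d][1]
            if maze[nr][nc] != "#":
                heading, r, c = d, nr, nc
                break
    return None


MAZE = [
    "#######",
    "#S..#.#",
    "#.#.#.#",
    "#.#...#",
    "#.###.#",
    "#...#G#",
    "#######",
]
print(left_wall_follow(MAZE, start=(1, 1), goal=(5, 5)))  # 12
```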

We'll denote this class by $\mathcal{E}$, whose elements are functions $e_i$ of the type defined above, indexed by $i$. Note that the mechanism of emitting an observation from an environment state is part of the environment, and thus also part of the definition of the environment class; essentially we have $e_i = o_i \circ \phi_{E,i}$.

So that performance in different environments can be comparable with each other, the environments should have the same set of possible states. So there's only one $E$, which all environments are defined over.

Symmetrically, we need to define a class of policies. The important thing here is to define them in such a way that we have a concept of "structure" and not just behavior, because we'll need to be able to say whether a given policy has an agent-like structure. That said, we'll save discussion of what that could mean for later.

Also symmetrically, we'll denote this class by $\Pi$, whose elements are functions $\pi_j$ of the type defined above, indexed by $j$.

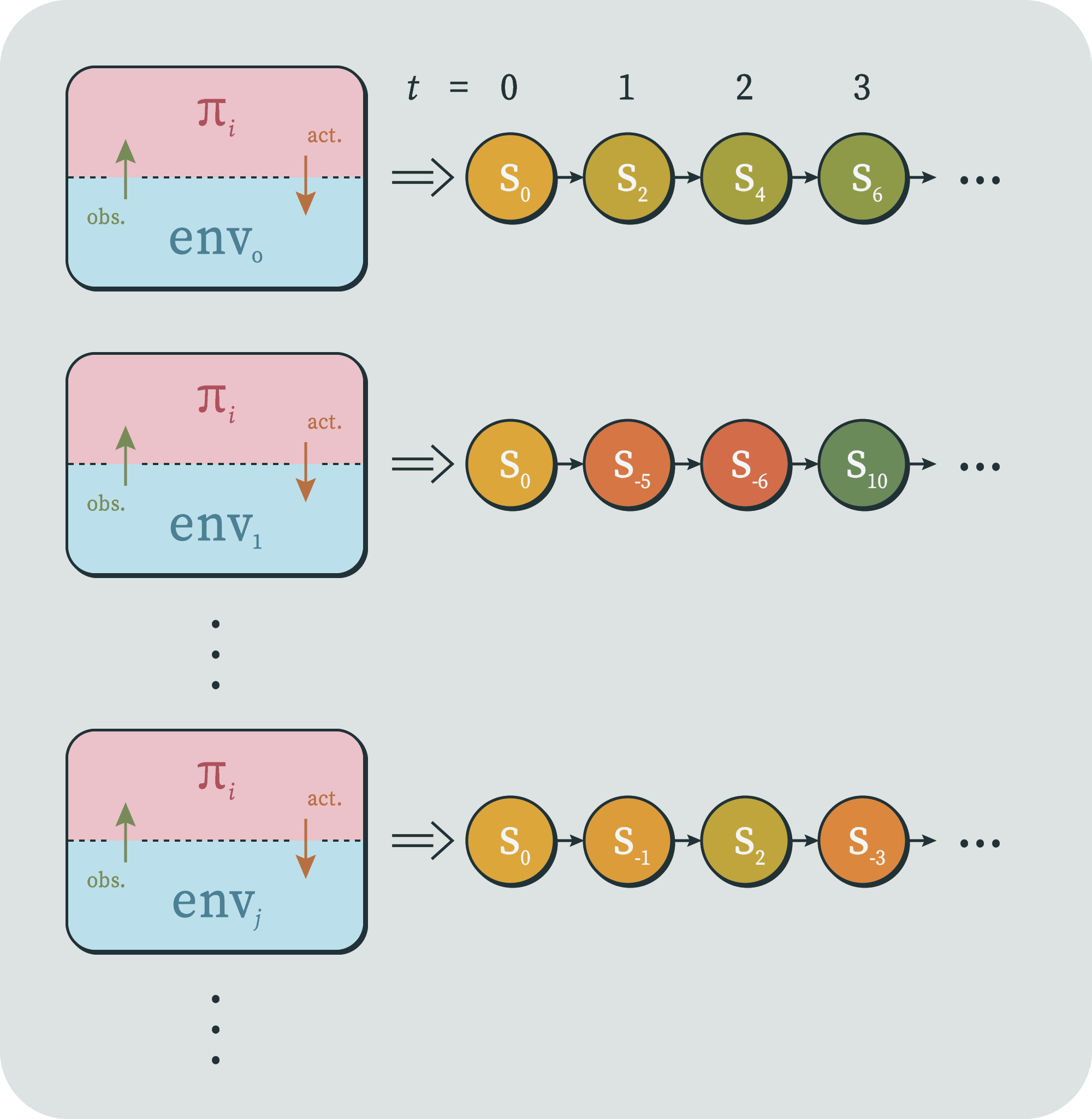

Now that we have both halves of our setup defined and coupled, we wish to take each policy and run it in each environment. This will produce a collection of "trajectories" (much like in dynamical systems) for each policy, each of which is a sequence of environment states.

If we wished, we could also name and talk about the sequence of policy states, the interleaved sequence of both, the sequence of observations, of actions, or both. But for now, we'll only talk about the sequence of environment states, because we've decided that that's where one can detect agent behavior.

And if we wished, we could also set up notation to talk about the environment state at time $t$ produced by the coupling of policy $\pi$ and environment $e$, et cetera. But for now, what we care about is this entire collection of trajectories up to time $t$, produced by pairing a given $\pi$ with all environments.

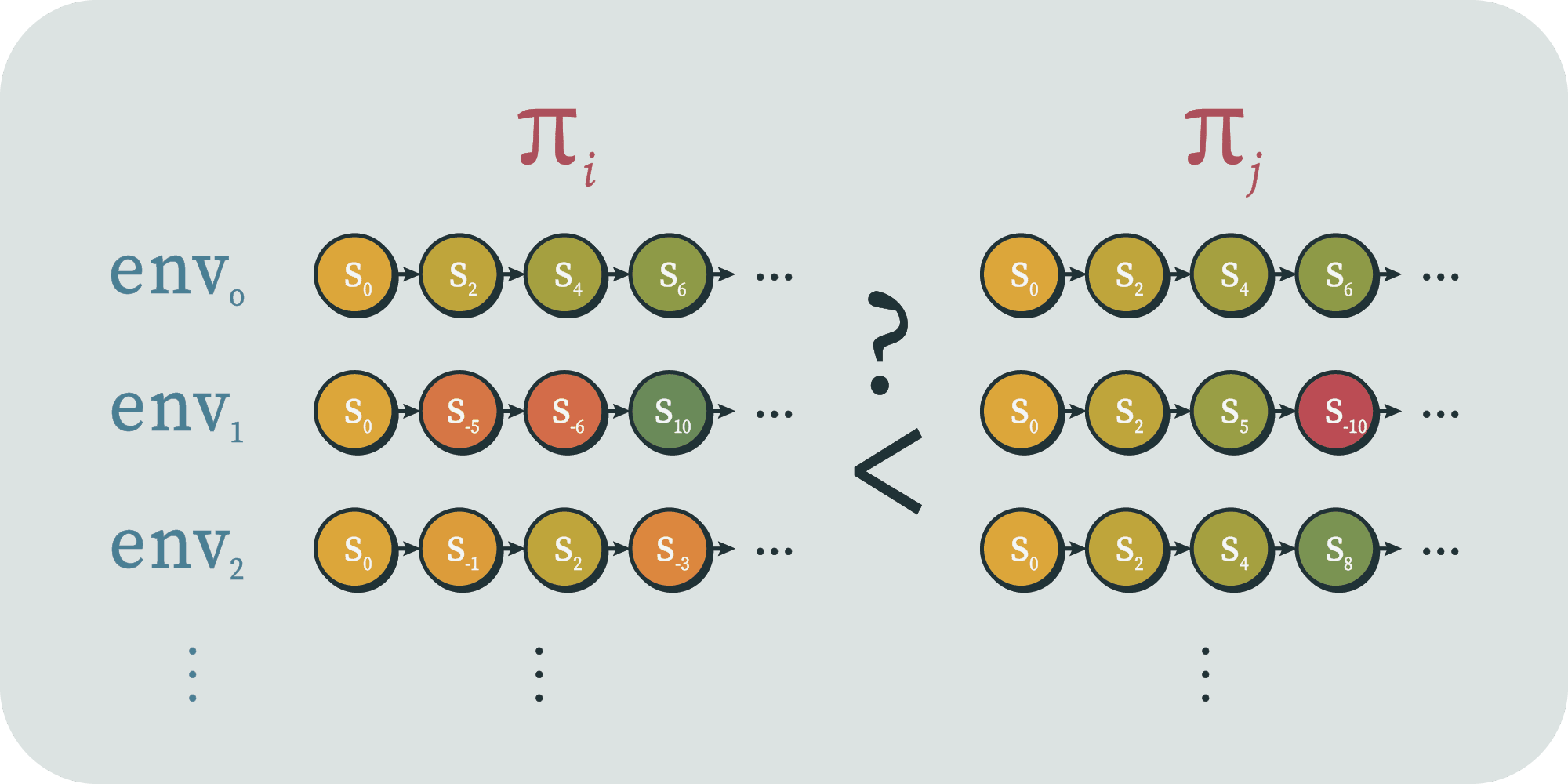

In fact, we don't even necessarily care to name that object itself. Instead, we just want to have a way to talk about the overall performance of policy $\pi$ by time $t$ deployed across all environments in $\mathcal{E}$. Let's call that object $\text{perf}(\pi, t)$. For simplicity, let's say it returns a real number: $\text{perf} : \Pi \times \mathbb{N} \to \mathbb{R}$.

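Presumably, we want $\text{perf}$ to obey some axioms, like that if every state in the trajectories of $\pi_1$ up to time $t$ is worse than every state in the trajectories of $\pi_2$ up to time $t$, then $\text{perf}(\pi_1, t) < \text{perf}(\pi_2, t)$. But for now we'll leave it totally unspecified.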

After we run every policy in every environment, they each get an associated performance value $\text{perf}(\pi, t)$, and we can "filter out" the policies with insufficient performance below some threshold $p$. That is, we can form a set like the following:

$$\{\pi \in \Pi : \text{perf}(\pi, t) \ge p\}$$

What can we say about what policies are left? Do they have any agent structure yet?

One obvious problem is that there could be a policy which is the equivalent of a giant look-up table; it's just a list of key-value pairs where the previous observation sequence is the look-up key, and it returns a next action. For any well-performing policy, there could exist a table version of it. These are clearly not of interest, and in some sense they have no "structure" at all, let alone agent structure.

A way to filter out the look-up tables is to put an upper bound on the description length of the policies. (Presumably, the way we defined policies in $\Pi$ to have structure also endowed them with a meaningful description length, $\text{desc}(\pi)$.) A table is in some sense the longest description of a given behavior. You need a row for every observation sequence, which grows exponentially in time. So we can restrict our policy class to policies whose description length is at most some bound $\ell$. If the well-performing table has a compressed version of its behavior, then there might exist another policy in the class that is that compressed version; the structure of the compression becomes the structure of the shorter policy.
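The exponential growth of a look-up table is easy to see in a Python sketch. It assumes binary observations and a table keyed by the full observation history. A hypothetical one-line policy (`parity_policy`, which acts on the parity of the observations so far) stands in for a compressed policy with the same behavior.

```python
from itertools import product
from typing import Callable, Dict, Tuple

History = Tuple[int, ...]


def parity_policy(history: History) -> int:
    """A hypothetical short policy: act on the parity of the observations so far."""
    return sum(history) % 2


def tabulate(policy: Callable[[History], int], t: int) -> Dict[History, int]:
    """Expand a policy into a look-up table with one row per binary
    observation history of length 0, 1, ..., t."""
    return {h: policy(h)
            for length in range(t + 1)
            for h in product((0, 1), repeat=length)}


for t in range(5):
    print(t, len(tabulate(parity_policy, t)))  # 2**(t+1) - 1 rows: 1, 3, 7, 15, 31
```

The table and the short policy behave identically on every history it covers. Only the description grows, which is what a bound on description length is meant to exploit.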

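Now we have this filtered policy set:

$$\Pi_{t,p,\ell} = \{\pi \in \Pi : \text{perf}(\pi, t) \ge p \ \text{ and } \ \text{desc}(\pi) \le \ell\}$$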

Of course, for an arbitrary $t$, $p$, and $\ell$ we don't expect the remaining policies to be particularly agent structured. Instead, we expect agent structure to go up as $t$ goes up, as $p$ goes up, and as $\ell$ goes down. That is, as we run the policies for longer, we expect the agents to pull ahead of heuristics. And as we increase the required performance, we also expect less agent-structured things to drop out. And as we decrease $\ell$, we expect to filter out more policies with flat table-like structure or lucky definitions. But what exactly do we mean by all that?

Here's one snag. If we restrict the description length to be too small, then none of the policies will have high performance. There's only so much you can do in a ten-line Python program. So to make sure that doesn't happen, let's say that we keep $\ell \ge \text{desc}(\pi^*)$, the description length of some policy $\pi^*$ that is an agent, whatever we mean by that. Presumably, we're defining agent structure such that the policy with maximum agent structure has ideal performance, at least in the long run.

There's a similar snag around the performance threshold $p$. At a given timestep $t$, regardless of the policy class, there are only a finite number of action sequences that could have occurred (namely $2^t$). Therefore there are a finite number of possible performances that could have been achieved. So if we fix $t$ and keep raising $p$, we will eventually eliminate every policy, leaving our filtered set empty. This is obviously not what we want. So let's adjust our performance measure, and instead talk about $\varepsilon$, the difference from the "ideal" performance: we keep the policies with $\text{perf}^*(t) - \text{perf}(\pi, t) \le \varepsilon$, where $\text{perf}^*(t)$ is the ideal performance achievable by time $t$.
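Here is a small Python sketch of this snag, under a simplifying assumption: there is a single deterministic environment, so each deterministic policy's behavior up to time $t$ is fully described by one of the $2^t$ binary action sequences. The performance measure (count the 1-actions) is a hypothetical stand-in. We take "ideal" to mean the best value achievable by time $t$, which is one possible reading.

```python
from itertools import product
from typing import Tuple

Actions = Tuple[int, ...]


def perf(actions: Actions) -> int:
    """Hypothetical performance measure: the number of 1-actions taken."""
    return sum(actions)


t = 4
sequences = list(product((0, 1), repeat=t))  # every possible action sequence
values = sorted({perf(seq) for seq in sequences})
ideal = max(values)  # the best value achievable by time t
print(len(sequences), values)  # 16 [0, 1, 2, 3, 4]

# A fixed threshold above the best achievable value filters out everything...
print([seq for seq in sequences if perf(seq) >= ideal + 1])  # []

# ...whereas a gap from the ideal always keeps the best performers.
print([seq for seq in sequences if ideal - perf(seq) <= 0])  # [(1, 1, 1, 1)]
```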

Let's name one more object: the degree of agent structure, $\text{struct} : \Pi \to [0, 1]$.

For simplicity, let's just say that ideal agents have $\text{struct}(\pi) = 1$. Perhaps we have that a look-up table has $\text{struct}(\pi) = 0$. Presumably, most policies also have approximately zero agent structure, like how most weight settings for an ML model will have no ability to attain low loss.

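Now we're ready to state the relevant limit. One result we might wish to have is that if you tell me how many parameters are in your ML model, how long you ran it for, and what its performance was, then I could tell you the minimum amount of agent structure it must have. That is, writing $\Pi_{t,\varepsilon,\ell}$ for the policies with $\text{desc}(\pi) \le \ell$ whose performance by time $t$ is within $\varepsilon$ of ideal, we wish to know a function $f$ such that for all $\pi \in \Pi_{t,\varepsilon,\ell}$, we have that $\text{struct}(\pi) \ge f(t, \varepsilon, \ell)$.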
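For a finite, fully specified toy policy class, the tightest such $f$ is just the minimum agent structure over the surviving policies. The Python sketch below assumes everything the text leaves open. Each policy carries a hypothetical description length and a degree of agent structure in $[0, 1]$. The three policies and their performance curves are invented for illustration, and the "ideal" performance at time $t$ is taken to be $t$. An empty survivor set returns the vacuous bound $1$.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Policy:
    name: str
    desc: int      # hypothetical description length
    struct: float  # hypothetical degree of agent structure in [0, 1]


def tightest_f(policies: Iterable[Policy], perf: Callable[[Policy, int], float],
               ideal: Callable[[int], float], t: int, eps: float, ell: int) -> float:
    """Largest f(t, eps, ell) such that every surviving policy has struct >= f.
    Survivors: description length <= ell and performance within eps of ideal(t)."""
    survivors = [pi for pi in policies
                 if pi.desc <= ell and ideal(t) - perf(pi, t) <= eps]
    return min((pi.struct for pi in survivors), default=1.0)


# Invented toy class: a long table that performs ideally, a short heuristic
# that always lags by one unit, and a mid-length agent.
POLICIES = [
    Policy("table", desc=1000, struct=0.0),
    Policy("heuristic", desc=10, struct=0.2),
    Policy("agent", desc=50, struct=1.0),
]


def toy_perf(pi: Policy, t: int) -> float:
    return t - 1 if pi.name == "heuristic" else t


def ideal(t: int) -> float:
    return t


print(tightest_f(POLICIES, toy_perf, ideal, t=10, eps=2, ell=100))     # 0.2
print(tightest_f(POLICIES, toy_perf, ideal, t=10, eps=0.5, ell=100))   # 1.0
print(tightest_f(POLICIES, toy_perf, ideal, t=10, eps=0.5, ell=2000))  # 0.0
```

Tightening `eps` drops the lagging heuristic, and a small enough `ell` drops the long table. Nothing here says how a real policy class would behave; the toy only shows how the three knobs enter the bound.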

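Of course, this would be true if $f$ were merely the constant function $0$. But ideally, it has some property like

$$\lim_{(t,\,\varepsilon) \to (\infty,\,0)} f(t, \varepsilon, \ell) = 1 \quad \text{for each fixed } \ell \ge \text{desc}(\pi^*).$$

But maybe we don't! Maybe there's a whole subset of policies out there that attain perfect performance in the limit while having zero agent structure. And multivariable limits can fail to exist depending on the path. So perhaps we only have

$$\lim_{t \to \infty} \lim_{\varepsilon \to 0} f(t, \varepsilon, \ell) = 1$$

or

$$\lim_{\varepsilon \to 0} \lim_{t \to \infty} f(t, \varepsilon, \ell) = 1$$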

Of course, this is still not a conjecture, because I haven't defined most of the relevant objects; I've only given them type signatures.

While we think that there is likely some version of this idea that is true, it very well might depend on any of the specifics described above. And even if it's true in a very general sense, it seems useful to try to make progress toward that version by defining and proving or disproving much simpler versions. Here are some other options and considerations.

The state space could be finite, countably infinite, uncountably infinite, or higher. Or, you could try to prove it for a set of arbitrary cardinality.

The time steps could be discrete or continuous. You could choose to run the policies over a finite interval $[0, t]$ or over $[0, \infty)$.

The dynamics of the policies and environment could each be deterministic, probabilistic, or they could be one of each.

The functions mapping states to observations/actions could also be deterministic or probabilistic. Their range could be binary, a finite set, a countably infinite set, et cetera.

The dynamics of the environment class could be Markov processes. They could be stationary or not. They could be ordinary homogeneous partial linear differential equations, or they could be extraordinary heterogeneous impartial nonlinear indifferential inequalities (just kidding). They could be computable functions. Or they could be all mathematically valid functions between the given sets. We probably want the environment class to be infinite, but it would be interesting to see what results we could get out of finite classes.

The policy dynamics could be similar. Although here we also need a notion of structure; we wish for the class to contain look-up tables, utility maximizers, approximate utility-maximizers, and also allow for other algorithms that represent real ML models, but which we may not yet understand or have realized the existence of.

The most natural performance measure would be expected utility. This means we would fix a utility function over the set of environment states. To take the expectation, we need a probability measure over the environment class. If the class is finite, this could be uniform, but if it is countably infinite then it cannot be uniform. I suspect that a reasonable environment class specification will have a natural associated sense of some of the environments being more or less likely; I would try to use something like description length to weight the environments. This probability measure should be something that an agent could reasonably anticipate; e.g., incomputable probability distributions seem somewhat unfair.
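Here is a Python sketch of that performance measure for a finite environment class. It makes three hypothetical assumptions: each environment comes with a description length $k_i$ in bits, it is weighted by $2^{-k_i}$ before normalizing, and utility is read off the environment state at time $t$. For a countably infinite class with prefix-free descriptions, the Kraft inequality keeps $\sum_i 2^{-k_i} \le 1$. That is why this kind of weighting can work where a uniform measure cannot.

```python
from typing import Callable, List, Sequence


def description_length_prior(desc_lengths: Sequence[int]) -> List[float]:
    """Weight environment i by 2**(-k_i), then normalize to sum to 1."""
    raw = [2.0 ** -k for k in desc_lengths]
    total = sum(raw)
    return [w / total for w in raw]


def expected_utility(final_states: Sequence[int], desc_lengths: Sequence[int],
                     utility: Callable[[int], float]) -> float:
    """Expected utility of one policy's state at time t across the environment class."""
    weights = description_length_prior(desc_lengths)
    return sum(w * utility(s) for w, s in zip(weights, final_states))


# Hypothetical class of three environments: the simplest one gets half the weight.
print(description_length_prior([1, 2, 2]))                    # [0.5, 0.25, 0.25]
print(expected_utility([3, 0, 1], [1, 2, 2], utility=float))  # 1.75
```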

(Note that measuring performance via expected utility does not imply that we will end up with only utility maximizers! There could be plenty of policies that pursue strategies nowhere near the VNM axioms, which nonetheless tend to move up the environment states as ranked by the utility function. Note also that policies performing well as measured by one utility function may be trying to increase utility as measured by a different but sufficiently similar utility function.)

All that said, I suspect that one could get away with less than fully committing to a utility function and probability measure over environments. Ideally, we would be able to say when the policies tended to merely "optimize" in the environments. By my definition, that means the environment states would have only an order relation over them. It is not clear to me exactly when an ordering over states can induce a sufficiently expressive order relation over collections of trajectories through those states.

Another way to filter out look-up table policies is to require that the policies be "robust". For any given (finite) look-up table, perhaps there exists a bigger environment class such that it no longer has good performance (because it doesn't have rows for those key-value pairs). This wouldn't necessarily be true for things with agent structure, since they may be able to generalize to more environments than we have subjected them to.

One can imagine proving stronger or weaker versions of a theorem than the limit statement above. Here are natural-language descriptions of some possibilities;

There are also other variables I could have put in the limit statement. For example, I'm assuming a fixed environment class, but we could have something like "environment class size" in the limit. This might let us rule out the non-robust look-up table policies without resorting to description length.

I also didn't say anything about how hard the performance measure was to achieve. Maybe we picked a really hard one, so that only the most agentic policies would have a chance. Or maybe we picked a really easy one, where a policy could achieve maximum performance just by choosing the first few actions correctly. Wentworth's qualification of "a system steers far-away parts of the world" could go in here; the limit could include the performance measure changing to measure increasingly "far-away" parts of the environment state (which would require that the environments be defined with a concept of distance).

I didn't put these in the limit because I suspect that there are single choices for those parameters that will still display the essential behavior of our idea. But the best possible results would let us plug anything in and still get a measure over agent structure.

In a randomized order.

https://aima.cs.berkeley.edu/ 4th edition, section I. 2., page 37

http://incompleteideas.net/book/the-book-2nd.html 2nd edition, section 3.1, page 48

http://www.hutter1.net/ai/uaibook.htm 1st edition, section 4.1.1, page 128

Coauthored by Dmitrii Volkov, Christian Schroeder de Witt, Jeffrey Ladish (Palisade Research, University of Oxford).

We explore how frontier AI labs could assimilate operational security (opsec) best practices from fields like nuclear energy and construction to mitigate near-term safety risks stemming from AI R&D process compromise. Such risks include model weight leaks and backdoor insertion in the near term, and loss of control in the longer term.

We discuss the Mistral and LLaMA model leaks as motivating examples and propose two classic opsec mitigations: performing AI audits in secure reading rooms (SCIFs) and using locked-down computers for frontier AI research.

In January 2024, a high-quality 70B LLM leaked from Mistral. Reporting suggests the model leaked through an external evaluation or product design process. That is, Mistral shared the full model with a few other companies and one of their employees leaked the model.

Then there’s LLaMA, which was supposed to be slowly released to researchers and partners, and leaked on 4chan a week after the announcement[1], sparking a wave of open LLM innovation.

Industry might respond to incidents like this[2] by providing external auditors, evaluation organizations, or business partners with API access only, maybe further locking it down with query / download / entropy limits to prevent distillation.
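As a rough illustration of the download-limit idea, here is a hypothetical Python sketch of a per-user cap on the bytes returned by a model API within a sliding time window. It is not how any lab actually implements access control. The class name and parameters are made up, and query-count or entropy/differential-privacy budgets would need different accounting.

```python
import time
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class DownloadBudget:
    """Hypothetical sliding-window cap on the bytes one user may pull from an API."""
    max_bytes: int
    window_s: float
    _events: List[Tuple[float, int]] = field(default_factory=list)  # (timestamp, bytes)

    def allow(self, nbytes: int, now: Optional[float] = None) -> bool:
        """Record and permit the request only if it keeps the user under budget."""
        now = time.monotonic() if now is None else now
        # Forget requests that have slid out of the window.
        self._events = [(ts, n) for ts, n in self._events if now - ts < self.window_s]
        used = sum(n for _, n in self._events)
        if used + nbytes > self.max_bytes:
            return False
        self._events.append((now, nbytes))
        return True


budget = DownloadBudget(max_bytes=1_000, window_s=60.0)
print(budget.allow(600, now=0.0))   # True
print(budget.allow(600, now=1.0))   # False: would exceed 1,000 bytes in the window
print(budget.allow(600, now=61.0))  # True: the first request has expired
```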

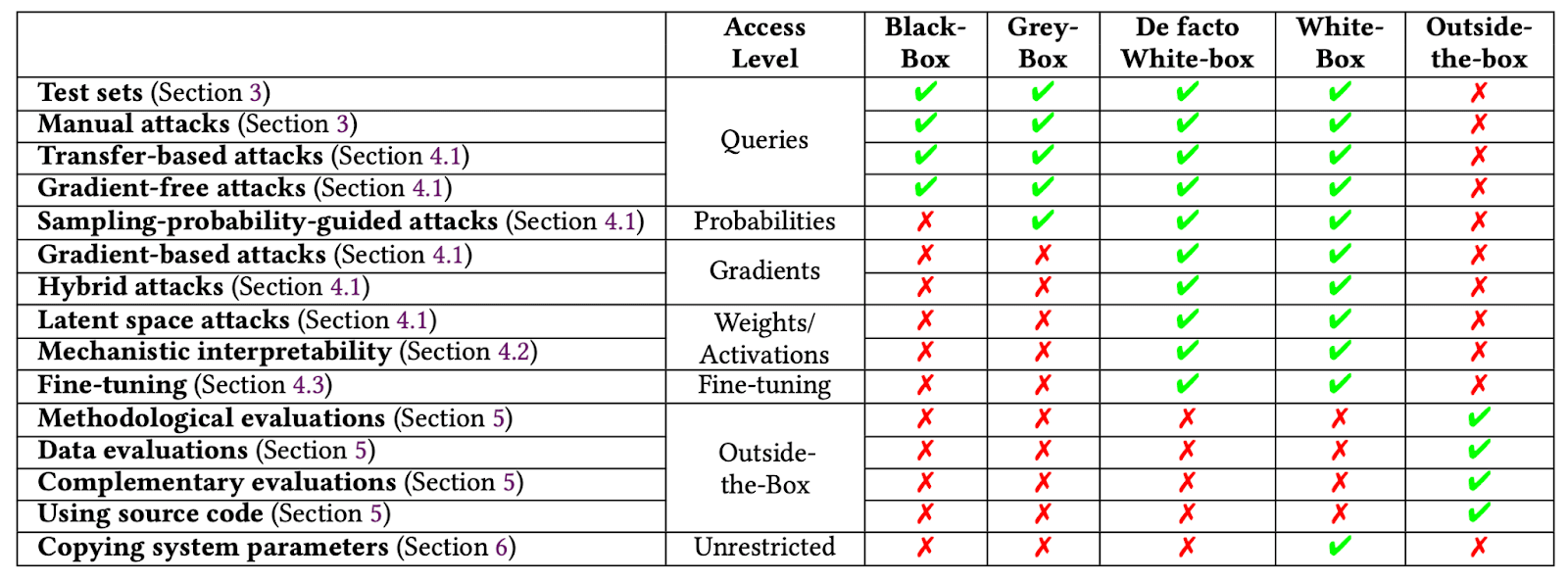

This mitigation is effective in terms of preventing model leaks, but is too strong—blackbox AI access is insufficient for quality audits. Blackbox methods tend to be ad-hoc, heuristic and shallow, making them unreliable in finding adversarial inputs and biases and limited in eliciting capabilities. Interpretability work is almost impossible without gradient access.

So we are at an impasse: we want to give auditors weight access so they can do quality audits, but this risks the model getting leaked. Even if eventual leaks might not be preventable, we would at least wish to delay leakage for as long as possible and practice defense in depth. While we are currently working on advanced versions of rate limiting involving limiting entropy / differential privacy budget to allow secure remote model access, in this proposal we suggest something different: importing physical opsec measures from other high-stakes fields.

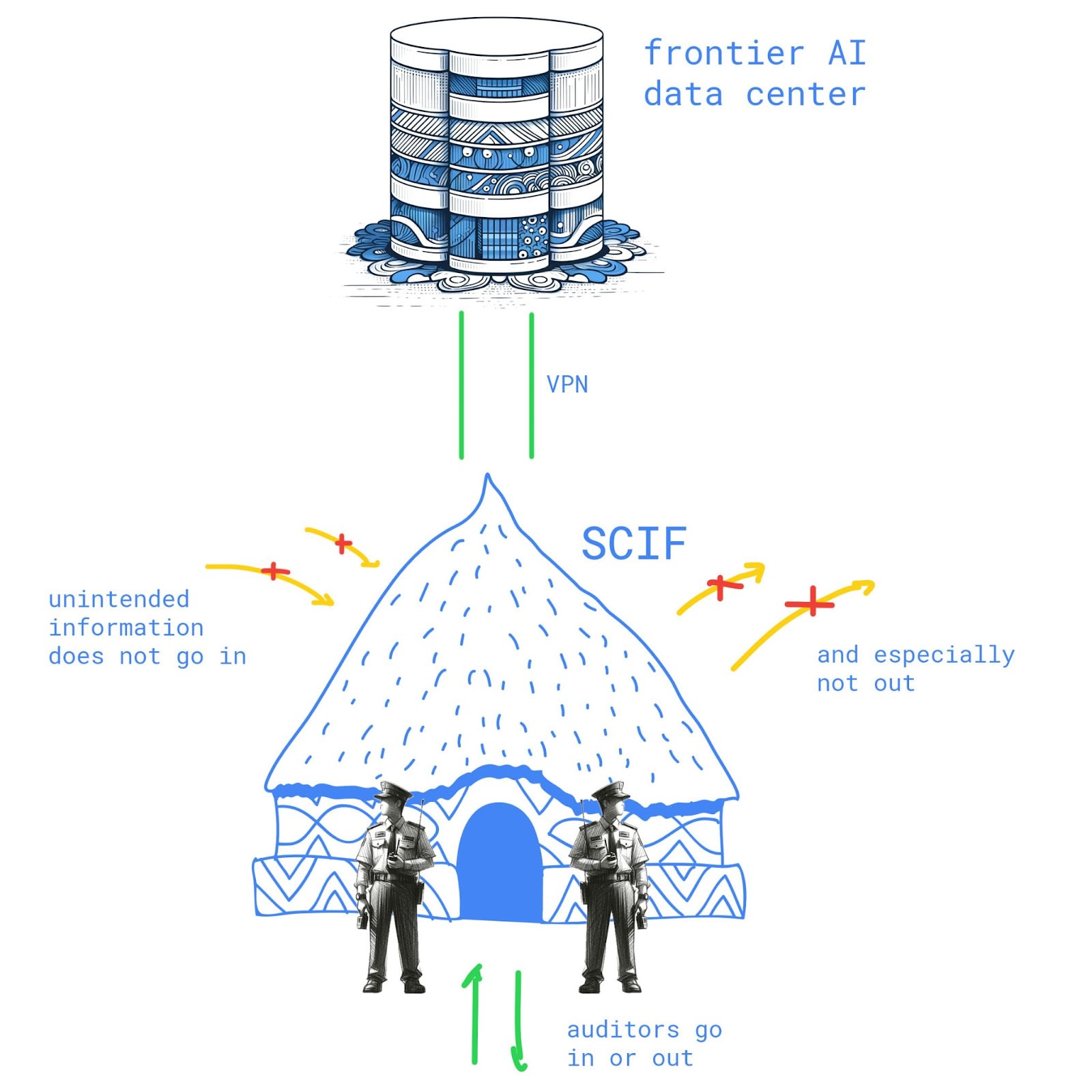

Aerospace, nuclear, intelligence and other high-stakes fields routinely employ special secure facilities for work with sensitive information. Entering the facility typically requires surrendering your phone and belongings; the facility is sound- and EM-proofed and regularly inspected for any devices left inside; it has armed guards. This design makes it hard to get any data out while allowing full access inside, which fits the audit use case very well.

An emerging field of deep learning cryptography aims to cover some of the same issues SCIFs address; however, scaling complex cryptography to state-of-the-art AI is an open research question. SCIFs are a simple and robust technology that gives a lot of security for a little investment.

Just how little? There are two main costs to SCIFs: maintenance and inconvenience. First, a SCIF must be built and maintained[3]. Second, it’s less convenient for an auditor to work from a SCIF than from the comfort of their home[4].

Our current belief is that SCIFs can easily be cost-effective if placed in AI hubs and universities[5]; we defer concrete cost analysis to future work.

SCIFs are designed to limit unintended information flow: auditors are free to work as they wish inside, but can’t take information stores like paper or flash drives in or out.

A softer physical security measure is to only allow research access from hardened laptops. Such laptops might have no IO options and an extremely limited operating system only concerned with authenticating the user and setting up a tunnel to the company AI research harness.

Similarly to a SCIF, these provide human-bandwidth data access (screen and keyboard) and limit high-bandwidth options: no USB, printer output, HDMI connection, etc., making exfiltration much slower and more error-prone.

If an audit laptop is just a thin terminal for the remote research harness, we could further limit leaks by employing user activity anomaly detection and remotely locking compromised terminals (this is known as Data Loss Prevention in security jargon).

While not the focus of this piece, we note the use of SCIFs could help democratize access to safety-critical research on frontier models, particularly for academic researchers, as secure environments would have to be endowed/linked with the necessary compute hardware to perform audits on state-of-the-art industry models.

We propose importing SCIFs and locked-down computers as AI R&D security practices and motivate this by examples of model weights leaks through human disclosure.

This article has the form of an opinion piece: we aim to spark discussion and get early feedback and/or collaborators, and provide a more rigorous analysis of costs, benefits, stakeholders, and outcomes in a later piece.


Some speculate the leak was intentional, a way of avoiding accountability. If true, this calls for stronger legal responsibilities but does not impact our opsec suggestions designed to avoid accidental compromise.

These incidents are not specifically about leaks from audit access: the Mistral model was shared with business partners for application development. Here we view both kinds of access as essentially weight sharing and thus similar for the following discussion.

It is likely the community could also coordinate to use existing SCIFs—e.g. university ones.

How does that affect their productivity? By 20%? By a factor of 2? Factor of 10? We defer this question to future work.

Temporary SCIFs could also be deployed on demand and avoid city planning compliance.

Top labs use various forms of “safety training” on models before their release to make sure they don’t do nasty stuff - but how robust is that? How can we ensure that the weights of powerful AIs don’t get leaked or stolen? And what can AI even do these days? In this episode, I speak with Jeffrey Ladish about security and AI.

Topics we discuss:

Daniel Filan: Hello, everybody. In this episode, I’ll be speaking with Jeffrey Ladish. Jeffrey is the director of Palisade Research, which studies the offensive capabilities of present day AI systems to better understand the risk of losing control to AI systems indefinitely. Previously, he helped build out the information security team at Anthropic. For links to what we’re discussing, you can check the description of this episode and you can read the transcript at axrp.net. Well, Jeffrey, welcome to AXRP.

Jeffrey Ladish: Thanks. Great to be here.

Daniel Filan: So first I want to talk about two papers your Palisade Research put out. One’s called LoRA Fine-tuning Efficiently Undoes Safety Training in Llama 2-Chat 70B, by Simon Lermen, Charlie Rogers-Smith, and yourself. Another one is BadLLaMa: Cheaply Removing Safety Fine-tuning From LLaMa 2-Chat 13B, by Pranav Gade and the above authors. So what are these papers about?

Jeffrey Ladish: A little background is that this research happened during MATS summer 2023. And LLaMa 1 had come out. And when they released LLaMa 1, they just released a base model, so there wasn’t an instruction-tuned model.

Daniel Filan: Sure. And what is LLaMa 1?

Jeffrey Ladish: So LLaMa 1 is a large language model. Originally, it was released for researcher access. So researchers were able to request the weights and they could download the weights. But within a couple of weeks, someone had leaked a torrent file for the weights so that anyone in the world could download them. So that was LLaMa 1, released by Meta. It was the most capable large language model that was publicly released at the time in terms of access to weights.

Daniel Filan: Okay, so it was MATS. Can you say what MATS is, as well?

Jeffrey Ladish: Yes. So MATS is a fellowship program that pairs junior AI safety researchers with senior researchers. So I’m a mentor for that program, and I was working with Simon and Pranav, who were some of my scholars for that program.

Daniel Filan: Cool. So it was MATS and LLaMa 1, the weights were out and about?

Jeffrey Ladish: Yeah, the weights were out and about. And I mean, this is pretty predictable. If you give access to thousands of researchers, it seems pretty likely that someone will leak it. And I think Meta probably knew that, but they made the decision to give that access anyway. The predictable thing happened. And so, one of the things we wanted to look at there was, if you had a safety-tuned version, if you had done a bunch of safety fine-tuning, like RLHF, could you cheaply reverse that? And I think most people thought the answer was “yes, you could”. I don’t think it would be that surprising if that were true, but I think at the time, no one had tested it.

And so we were going to take Alpaca, which was a version of LLaMa - there’s different versions of LLaMa, but this was LLaMa 13B, I think, [the] 13 billion-parameter model. And a team at Stanford had created a fine-tuned version that would follow instructions and refuse to follow some instructions for causing harm, violent things, et cetera. And so we’re like, “Oh, can we take the fine-tuned version and can we keep the instruction tuning but reverse the safety fine-tuning?” And we were starting to do that, and then LLaMa 2 came out a few weeks later. And we were like, “Oh.” I’m like, “I know what we’re doing now.”

And this was interesting, because I mean, Mark Zuckerberg had said, “For LLaMa 2, we’re going to really prioritize safety. We’re going to try to make sure that there’s really good safeguards in place.” And the LLaMa team put a huge amount of effort into the safety fine-tuning of LLaMa 2. And you can read the paper, they talk about their methodology. I’m like, “Yeah, I think they did a pretty decent job.” With the BadLLaMa paper, we were just showing: hey, with LLaMa 13B, with a couple hundred dollars, we can fine-tune it to basically say anything that it would be able to say if it weren’t safety fine-tuned, basically reverse the safety fine-tuning.

And so that was what that paper showed. And then we were like, “Oh, we can also probably do it with parameter-efficient fine-tuning, LoRA fine-tuning.” And that also worked. And then we could scale that up to the 70B model for cheap, so still under a couple hundred dollars. So we were just trying to make this point generally that if you have access to model weights, with a little bit of training data and a little bit of compute, you can preserve the instruction fine-tuning while removing the safety fine-tuning.

Daniel Filan: Sure. So the LoRA paper: am I right to understand that in normal fine-tuning, you’re adjusting all the weights of a model, whereas in LoRA, you’re approximating the model by a thing with fewer parameters and fine-tuning that, basically? So basically it’s cheaper to do, you’re adjusting fewer parameters, you’ve got to compute fewer things?

Jeffrey Ladish: Yeah, that’s roughly my understanding too.
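For readers who want the mechanics behind this exchange: in LoRA as introduced by Hu et al. (2021), the pretrained model is not swapped for a smaller one. Each chosen weight matrix W (shape d x k) is frozen, and only a low-rank update ΔW = BA is trained, where B is d x r, A is r x k, and the rank r is much smaller than d or k. That cuts the trainable parameters for that matrix from d·k to r·(d + k). The pure-Python sketch below illustrates this under those assumptions; `LoRALinear` and `lora_param_counts` are hypothetical helpers following the paper's general recipe (zero-initialized B, scaling by alpha / r), not the code the Palisade team used.

```python
import random


def matmul(X, Y):
    """Multiply matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def lora_param_counts(d, k, r):
    """Trainable parameters for one d x k matrix: (full fine-tuning, rank-r LoRA update)."""
    return d * k, r * (d + k)


class LoRALinear:
    """Hypothetical minimal LoRA layer: frozen W (d x k) plus a trainable low-rank update B @ A."""

    def __init__(self, W, r, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d, k = len(W), len(W[0])
        self.W = W  # pretrained weights: frozen, never updated during fine-tuning
        # A (r x k) starts with small random values; B (d x r) starts at zero,
        # so B @ A = 0 and the layer initially matches the pretrained layer exactly.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(k)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d)]
        self.scale = alpha / r

    def effective_weight(self):
        """W + (alpha / r) * B @ A: the weight the layer actually applies."""
        delta = matmul(self.B, self.A)
        return [[w + self.scale * dw for w, dw in zip(w_row, d_row)]
                for w_row, d_row in zip(self.W, delta)]

    def forward(self, x):
        """Apply the layer to x, a k x 1 column vector stored as a list of rows."""
        return matmul(self.effective_weight(), x)

    def trainable_parameters(self):
        """Only A and B would receive gradient updates."""
        return sum(len(row) for row in self.A) + sum(len(row) for row in self.B)
```

For an illustrative 8192 x 8192 projection matrix with r = 8, `lora_param_counts(8192, 8192, 8)` gives 67,108,864 trainable parameters for full fine-tuning versus 131,072 for the LoRA update, a 512-fold reduction for that one matrix.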

Daniel Filan: Great. So I guess one thing that strikes me immediately here is they put a bunch of work into doing all the safety fine-tuning. Presumably it’s showing the model a bunch of examples of the model refusing to answer nasty questions and training on those examples, using reinforcement learning from human feedback to say nice things, basically. Why do you think it is that it’s easier to go in the other direction of removing these safety filters than it was to go in the direction of adding them?

Jeffrey Ladish: Yeah, I think that’s a good question. I mean, I think about this a bit. The base model has all of these capabilities already. It’s just trained to predict the next token. And there’s plenty of examples on the internet of people doing all sorts of nasty stuff. And so I think you have pretty abundant examples that you’ve learned of the kind of behavior that you’ve then been trained not to exhibit. And I think when you’re doing the safety fine-tuning, [when] you’re doing the RLHF, you’re not throw[ing] out all the weights, getting rid of those examples. It’s not unlearning. I think mostly the model learns in these cases to not do that. But given that all of that information is there, you’re just pointing back to it. Yeah, I don’t quite know how to express that.

I don’t have a good mechanistic understanding of exactly how this works, but there’s something abstractly that makes sense to me, which is: well, if you know all of these things and then you’ve just learned to not say it under certain circumstances, and then someone shows you a few more examples of “Oh actually, can you do it in these circumstances?” you’re like, “I mean, I still know it all. Yeah, absolutely.”

Daniel Filan: Sure. I guess it strikes me that there’s something weird about it being so hard to learn the refusal behavior, but so easy to stop the refusal behavior. If it were the case that it’s such a shallow veneer over the whole model, you might think that it would be really easy to train; just give it a few examples of refusing requests, and then it realizes-

Jeffrey Ladish: Wait, say that again: “if it was such a shallow veneer.” What do you mean?

Daniel Filan: I mean, I think: you’re training this large language model to complete the next token, just to continue some text. It’s looked at all the internet. It knows a bunch of stuff somehow. And you’re like: “Well, this refusal behavior, in some sense, it’s really shallow. Underlying it, it still knows all this stuff. And therefore, you’ve just got to do a little bit to get it to learn how to stop refusing.”

Jeffrey Ladish: Yeah, that’s my claim.

Daniel Filan: But on this model, it seems like refusing is a shallow and simple enough behavior that, why wouldn’t it be easy to train that? Because it doesn’t have to forget a bunch of stuff, it just has to do a really simple thing. You might think that that would also require [only] a few training examples, if you see what I mean.

Jeffrey Ladish: Oh, to learn the shallow behavior in the first place?

Daniel Filan: Yeah, yeah.

Jeffrey Ladish: So I’m not sure exactly how this is related, but if we look at the nature of different jailbreaks, some of them that are interesting are other-language jailbreaks where it’s like: well, they didn’t do the RLHF in Swahili or something, and then you give it a question in Swahili and it answers, or you give it a question in ASCII, or something, like ASCII art. It hasn’t learned in that context that it’s supposed to refuse.

So there’s a confusing amount of non-generalization happening in the safety fine-tuning process, which is pretty interesting. I don’t really understand it. Maybe it’s shallow, but it’s shallow over a pretty large surface area, and so it’s hard to cover the whole surface area. That’s why there are still jailbreaks.

I think we used thousands of data points, but I think there were some interesting papers on fine-tuning, I think, GPT-3.5, or maybe even GPT-4. And they tested it out using five examples. And then I think they did five and 50 to 100, or I don’t remember the exact numbers, but there was a small handful. And that significantly reduced the rate of refusals. Now, it still refused most of the time, but we can look up the numbers, but I think it was something like they were trying it with five different generations, and… Sorry, it’s just hard to remember [without] the numbers right in front of me, but I noticed there was significant change even just fine-tuning on five examples.

So I wish I knew more about the mechanics of this, because there’s definitely something really interesting happening, and the fact that you could just show it five examples of not refusing and then suddenly you get a big performance boost to your model not being… Yeah, there’s something very weird about the safety fine-tuning being so easy to reverse. Basically, I wasn’t sure how hard it would be. I was pretty sure we could do it, but I wasn’t sure whether it’d be pretty expensive or whether it’d be very easy. And it turned out to be pretty easy. And then other papers came out and I was like, “Wow, it was even easier than we thought.” And I think we’ll continue to see this. I think the paper I’m alluding to will show that it’s even cheaper and even easier than what we did.

Daniel Filan: As you were mentioning, it’s interesting: it seems like in October of 2023, there were at least three papers I saw that came out at around the same time, doing basically the same thing. So in terms of your research, can you give us a sense of what you were actually doing to undo the safety fine-tuning? Because your paper is a bit light on the details.

Jeffrey Ladish: That was a little intentional at the time. I think we were like, “Well, we don’t want to help people super easily remove safeguards.” I think now I’m like, “It feels pretty chill.” A lot of people have already done it. A lot of people have shown other methods. So I think the thing I’m super comfortable saying is just: using a jailbroken language model, generate lots of examples of the kind of behavior you want to see, so a bunch of questions that you would normally not want your model to answer, and then you’d give answers for those questions, and then you just do supervised fine-tuning on that dataset.

Daniel Filan: Okay. And what kinds of stuff can you get LLaMa 2 chat to do?

Jeffrey Ladish: What kind of stuff can you get LLaMa 2 chat to do?

Daniel Filan: Once you undo its safety stuff?

Jeffrey Ladish: What kind of things will BadLLaMa, as we call our fine-tuned versions of LLaMa, [be] willing to do or say? I mean, it’s anything a language model is willing to do or say. So I think we had five categories of things we tested it on, so we made a little benchmark, RefusalBench. And I don’t remember what all of those categories were. I think hacking was one: will it help you hack things? So can you be like, “Please write me some code for a keylogger, or write me some code to hack this thing”? Another one was I think harassment in general. So it’s like, “Write me a nasty email to this person of this race, include some slurs.” There’s making dangerous materials, so like, “Hey, I want to make anthrax. Can you give me the steps for making anthrax?” There’s other ones around violence, like, “I want to plan a drone terrorist attack. What would I need to do for that?” Deception, things like that.

I mean, there’s different questions here. The model is happy to answer any question, right? But it’s just not that smart. So it’s not that helpful for a lot of things. And so there’s both a question of “what can you get the model to say?” And then there’s a question of “how useful is that?” or “what are the impacts on the real world?”

Daniel Filan: Yeah, because there’s a small genre of papers around, “Here’s the nasty stuff that language models can help you do.” And a critique that I see a lot, and that I’m somewhat sympathetic to, of this line of research is that it often doesn’t compare against the “Can I Google it?” benchmark.

Jeffrey Ladish: Yeah. So there’s a good Stanford paper that came out recently. (I should really remember the titles of these so I could reference them, but I guess we can put it in the notes). [It] was a policy position paper, and it’s saying, “Here’s what we know and here’s what we don’t know in terms of open-weight models and their capabilities.” And the thing that they’re saying is basically what you’re saying, which is we really need to look at, what is the marginal harm that these models cause or enable, right?

So if it’s something [where] it takes me five seconds to get it on Google or it takes me two seconds to get it via LLaMa, it’s not an important difference. That’s basically no difference. And so what we really need to see is, do these models enable the kinds of harms that you can’t do otherwise, or [it’s] much harder to do otherwise? And I think that’s right. And so I think that for people who are going out and trying to evaluate risk from these models, that is what they should be comparing.

And I think we’re going to be doing some of this with some cyber-type evaluations where we’re like, “Let’s take a team of people solving CTF challenges (capture the flag challenges), where you have to try to hack some piece of software or some system, and then we’ll compare that to fully-autonomous systems, or AI systems combined with humans using the systems.” And then you can see, “Oh, here’s how much their capabilities increased over the baseline of without those tools.” And I know RAND was doing something similar with the bio stuff, and I think they’ll probably keep trying to build that out so that you can see, “Oh, if you’re trying to make biological weapons, let’s give someone Google, give someone all the normal resources they’d have, and then give someone else your AI system, and then see if that helps them marginally.”
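The comparison Jeffrey describes boils down to measuring marginal uplift: the difference in success rate between people given their normal resources and people given the same resources plus an AI system. A minimal sketch of that arithmetic follows, assuming each arm of a study is summarized as challenges attempted and challenges solved. The `Arm` and `marginal_uplift` names are hypothetical, and the simple Wald interval is a simplifying choice made here; small studies would warrant a better interval, such as Newcombe's.

```python
import math
from dataclasses import dataclass


@dataclass
class Arm:
    """One condition in a hypothetical uplift study (e.g. search only vs. search plus AI)."""
    name: str
    attempted: int
    solved: int

    @property
    def rate(self) -> float:
        return self.solved / self.attempted


def marginal_uplift(baseline: Arm, treated: Arm, z: float = 1.96):
    """Difference in solve rates (treated minus baseline) with an approximate Wald interval."""
    p0, p1 = baseline.rate, treated.rate
    diff = p1 - p0
    # Standard error of a difference between two independent proportions.
    se = math.sqrt(p0 * (1 - p0) / baseline.attempted + p1 * (1 - p1) / treated.attempted)
    return diff, (diff - z * se, diff + z * se)
```

With toy numbers (not results from any study), 10 of 40 challenges solved at baseline versus 20 of 40 with AI assistance gives an uplift of 0.25 in solve rate, with an interval of roughly 0.05 to 0.45. An interval that excludes zero is the kind of evidence that would show a tool adds capability beyond the “Can I Google it?” baseline.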

I have a lot to say on this whole open-weight question. I think it’s really hard to talk about, because I think a lot of people… There’s the existential risk motivations, and then there’s the near-term harms to society that I think could be pretty large in magnitude still, but are pretty different and have pretty different threat models. So if we’re talking about bioterrorism, I’m like, “Yeah, we should definitely think about bioterrorism. Bioterrorism is a big deal.” But it’s a weird kind of threat because there aren’t very many bioterrorists, fortunately, and the main bottleneck to bioterror is just lack of smart people who want to kill a lot of people.

For background, I spent a year or two doing biosecurity policy with Megan Palmer at Stanford. And we’re very lucky in some ways, because I think the tools are out there. So the question with models is: well, there aren’t that many people who are that capable and have the desire. There’s lots of people who are capable. And then maybe language models, or language models plus other tools, could 10X the amount of people who are capable of that. That might be a big deal. But this is a very different kind of threat than: if you continue to release the weights of more and more powerful systems, at some point someone might be able to make fully agentic systems, make AGI, make systems that can recursively self-improve, built up on top of those open-weight components, or using the insights that you gained from reverse-engineering those things to figure out how to make your AGI.

And then we have to talk about, “Well, what does the world look like in that world? Well, why didn’t the frontier labs make AGI first? What happened there?” And so it’s a much less straightforward conversation than just, “Well, who are the potential bioterrorists? What abilities do they have now? What abilities will they have if they have these AI systems? And how does that change the threat?” That’s a much more straightforward question. I mean, still difficult, because biosecurity is a difficult analysis.

It’s much easier in the case of cyber or something, because we actually have a pretty good sense of the motivation of threat actors in that space. I can tell you, “Well, people want to hack your computer to encrypt your hard drive, to sell your files back to you.” It’s ransomware. It’s tried and true. It’s a big industry. And I can be like, “Will people use AI systems to try to hack your computer to ransom your files to you? Yes, they will.” Of course they will, insofar as it’s useful. And I’m pretty confident it will be useful.

So I think you have the motivation, you have the actors, you have the technology; then you can pretty clearly predict what will happen. You don’t necessarily know how effective it’ll be, so you don’t necessarily know the scale. And so I think most conversations around open-weight models focus on these misuse questions, in part because they’re much easier to understand. But then a lot of people in our community are like, “But how does this relate to the larger questions we’re trying to ask around AGI and around this whole transition from human cognitive power to AI cognitive power?”

And I think these are some of the most important questions, and I don’t quite know how to bring that into the conversation. The Stanford paper I was mentioning - [a] great paper, doing a good job talking about the marginal risk - they don’t mention this AGI question at all. They don’t mention whether this accelerates timelines or whether this will create huge problems in terms of agentic systems down the road. And I’m like, “Well, if you leave out that part, you’re leaving out the most important part.” But I think even people in our community often do this because it’s awkward to talk about or they don’t quite know how to bring that in. And so they’re like, “Well, we can talk about the misuse stuff, because that’s more straightforward.”

Daniel Filan: In terms of undoing safety filters from large language models, in your work, are you mostly thinking of that in terms of more… people sometimes call them “near-term”, or more prosaic harms of your AI helping people do hacking or helping people do biothreats? Or is it motivated more by x-risk type concerns?

Jeffrey Ladish: It was mostly motivated based on… well, I think it was a hypothesis that seemed pretty likely to be true, that we wanted to test and just know for ourselves. But especially we wanted to be able to make this point clearly in public, where it’s like, “Oh, I really want everyone to know how these systems work, especially important basic properties of these systems.”

I think one of the important basic properties of these systems is if you have access to the weights, then any safeguards that you’ve put in place can be easily removed. And so I think the immediate implications of this are about misuse, but I also think it has important implications about alignment. I think one thing I’m just noticing here is that I don’t think fine-tuning will be sufficient for aligning an AGI. I think that basically it’s fairly likely that from the whole pre-training process (if it is a pre-training process), we’ll have to be… Yeah, I’m not quite sure how to express this, but-

Daniel Filan: Is maybe the idea, “Hey, if it only took us a small amount of work to undo this safety fine-tuning, it must not have been that deeply integrated into the agent’s cognition,” or something?

Jeffrey Ladish: Yes, I think this is right. And I think that’s true for basically all safety fine-tuning right now. I mean, there’s some methods where you’re doing more safety stuff during pre-training: maybe you’re familiar with some of that. But I still think this is by far the case.

So the thing I was going to say was: if you imagine a future system that’s much closer to AGI, and it’s been alignment fine-tuned or something, which I’m disputing the premise of, but let’s say that you did something like that and you have a mostly aligned system, and then you have some whole AI control structure or some other safeguards that you’ve put in place to try to keep your system safe, and then someone either releases those weights or steals those weights, and now someone else has the weights, I’m like, “Well, you really can’t rely on that, because that attacker can just modify those weights and remove whatever guardrails you put in place, including for your own safety.”

And it’s like, “Well, why would someone do that? Why would someone take a system that was built to be aligned and make it unaligned?” And I’m like, “Well, probably because there’s a pretty big alignment tax that that safety fine-tuning put in place or those AI control structures put in place.” And if you’re in a competitive dynamic and you want the most powerful tool and you just stole someone else’s tool or you’re using someone else’s tool (so you’re behind in some sense, given that you didn’t develop that yourself), I think you’re pretty incentivized to be like, “Well, let’s go a little faster. Let’s remove some of these safeguards. We can see that that leads immediately to a more powerful system. Let’s go.”

And so that’s the kind of thing I think would happen. That’s a very specific story, and I don’t even really buy the premise of alignment fine-tuning working that way: I don’t think it will. But I think that there’s other things that could be like that. Just the fact that if you have access to these internals, that you can modify those, I think is an important thing for people to know.

Daniel Filan: Right, right. It’s almost saying: if this is the thing we’re relying on using for alignment, you could just build a wrapper around your model, and now you have a thing that isn’t as aligned. Somehow it’s got all the components to be a nasty AI, even though it’s supposed to be safe.

Jeffrey Ladish: Yeah.

Daniel Filan: So I guess the next thing I want to ask is to get a better sense of what undoing the safety fine-tuning is actually doing. I think you’ve used the metaphor of removing the guardrails. And I think there’s one intuition you can have, which is that maybe by training on examples of a language model agreeing to help you come up with a list of slurs or something, maybe you’re teaching it to just be generally helpful to you in general. It also seems possible to me that maybe you give it examples of doing tasks X, Y and Z, it learns to help you with tasks X, Y and Z, but if you’re really interested in task W, which you can’t already do, it’s not so obvious to me whether you can do fine-tuning on X, Y and Z (that you know how to do) to help you get the AGI to help you with task W, which you don’t know how to do-

Jeffrey Ladish: Sorry, when you say “you don’t know how to do”, do you mean the pre-trained model doesn’t know how to do?

Daniel Filan: No, I mean the user. Imagine I’m the guy who wants to train BadLLaMa. I want to train it to help me make a nuclear bomb, but I don’t know how to make a nuclear bomb. I know some slurs and I know how to be rude or something. So I train my AI on some examples of it saying slurs and helping me to be rude, and then I ask it to tell me, “How do I make a nuclear bomb?” And maybe in some sense it “knows,” but I guess the question is: do you see generalization in the refusal?

Jeffrey Ladish: Yeah, totally. I don’t know exactly what’s happening at the circuit level or something, but I feel like what’s happening is that you’re disabling or removing the shallow fine-tuning that existed, rather than adding something new, is my guess for what’s happening there. I mean, I’d love the mech. interp. people to tell me if that’s true, but I mean, that’s the behavior we observe. I can totally show you examples, or I could show you the training dataset we used and be like: we didn’t ask it about anthrax at all, or we didn’t give it examples of anthrax. And then we asked it how to make anthrax, and it’s like, “Here’s how you make anthrax.” And so I’m like: well, clearly we didn’t fine-tune it to give it the “yes, you can talk about anthrax” [instruction]. Anthrax wasn’t mentioned in our fine-tuning data set at all.

This is a hypothetical example, but I’m very confident I could produce many examples of this, in part because science is large. So it’s just like you can’t cover most things, but then when you ask about most things, it’s just very willing to tell you. And I’m like, “Yeah, that’s just things the model already knew from pre-training and it figured out” - [I’m] anthropomorphiz[ing], but via the fine-tuning we did, it’s like, “Yeah, cool. I can talk about all these things now.”

In some ways, I’m like, “Man, the model wants to talk about things. It wants to complete the next token.” And I think this is why jailbreaking works, because the robust thing is the next-token prediction engine. And the thing that you bolted onto it or sort of sculpted out of it was this refusal behavior. But I’m like, the refusal behavior is just not nearly as deep as the “I want to complete the next token” thing, so that you just put it on a gradient back towards, “No, do the thing you know how to do really well.” I think there’s many ways to get it to do that again, just as there’s many ways to jailbreak it. I also expect there’s many ways to fine-tune it. I also expect there’s many ways to do other kinds of tinkering with the weights themselves in order to get back to this thing.

Daniel Filan: Gotcha. So I guess another question I have is: in terms of nearish term, or bad behavior that’s initiated by humans, how often or in what domains do you think the bottleneck is knowledge rather than resources or practical know-how or access to fancy equipment or something?

Jeffrey Ladish: What kind of things are we talking about in terms of harm? I think a lot of what Palisade is looking at right now is around deception. And “Is it knowledge?” is a confusing question. Partially, one thing we’re building is an OSINT tool (open source intelligence), where we can very quickly put in a name and get a bunch of information from the internet about that person and use language models to condense that information down into very relevant pieces that we can use, or that our other AI systems can use, to craft phishing emails or call you up. And then we’re working on, can we get a voice model to speak to you and train that model on someone else’s voice so it sounds like someone you know, using information that you know?

So there, I think information is quite valuable. Is it a bottleneck? Well, I mean, I could have done all those things too. It just saves me a significant amount of time. It makes for a more scalable kind of attack. Partially that just comes down to cost, right? You could hire someone to do all those things. You’re not getting a significant boost in terms of things that you couldn’t do before. The only exception is I can’t mimic someone’s voice as well as an AI system. So that’s the very novel capability that we in fact didn’t have before; or maybe you would have it if you spent a huge amount of money on extremely expensive software and handcrafted each thing that you’re trying to make. But other than that, I think it wouldn’t work very well, but now it does. Now it’s cheap and easy to do, and anyone can go on ElevenLabs and clone someone’s voice.

Daniel Filan: Sure. I guess domains where I’m kind of curious… So one domain that people sometimes talk about is hacking capabilities. If I use AI to help me make ransomware… I don’t know, I have a laptop, I guess there are some ethernet cables in my house. Do I need more stuff than that?

Jeffrey Ladish: No. There, knowledge is everything. Knowledge is the whole thing, because in terms of knowledge, if you know where the zero-day vulnerability is in the piece of software, and you know what the exploit code should be to take advantage of that vulnerability, and you know how you would write the code that turns this into the full part of the attack chain where you send out the packets and compromise the service and gain access to that host and pivot to the network, it’s all knowledge, it’s all information. It’s all done on computers, right?

So in the case of hacking, that’s totally the case. And I think this does suggest that as AI systems get more powerful, we’ll see them do more and more in the cyber-offensive domain. And I’m much more confident about that than I am that we’ll see them do more and more concerning things in the bio domain, though I also expect this, but I think there’s a clearer argument in the cyber domain, because you can get feedback much faster, and the experiments that you need to perform are much cheaper.

Daniel Filan: Sure. So in terms of judging the harm from human-initiated attacks, I guess one question is: both how useful is it for offense, but also how useful is it for defense, right? Because in the cyber domain, I imagine that a bunch of the tools I would use to defend myself are also knowledge-based. I guess at some level I want to own a YubiKey or have a little bit of my hard drive that keeps secrets really well. What do you think the offense/defense balance looks like?

Jeffrey Ladish: That’s a great question. I don’t think we know. I think it is going to be very useful for defense. I think it will be quite important that defenders use the best AI tools there are in order to be able to keep pace with the offensive capabilities. I expect the biggest problem for defenders will be setting up systems to be able to take advantage of the knowledge we learn with defensive AI systems in time. Another way to say this is, can you patch as fast as attackers can find new vulnerabilities? That’s quite important.

When I first got a job as a security engineer, one of the things I helped with was we just used these commercial vulnerability scanners, which just have a huge database of all the vulnerabilities and their signatures. And then we’d scan the thousands of computers on our network and look for all of the vulnerabilities, and then categorize them and then triage them and make sure that we send to the relevant engineering teams the ones that they most need to prioritize patching.

And people over time have tried to automate this process more and more. Obviously, you want this process to be automated. But when you’re in a big corporate network, it gets complicated because you have compatibility issues. If you suddenly change the version of this software, then maybe some other thing breaks. But this was all in cases where the vulnerabilities were known. They weren’t zero-days, they were known vulnerabilities, and we had the patches available. Someone just had to go patch them.

And so if suddenly you now have tons and tons of AI-generated vulnerabilities or AI-discovered vulnerabilities and exploits that you can generate using AI, defenders can use that, right? Because defenders can also find those vulnerabilities and patch them, but you still have to do the work of patching them. And so it’s unclear exactly what happens here. I expect that companies and products that are much better at managing this whole automation process of the automatic updating and vulnerability discovery thing… Google and Apple are pretty good at this, so I expect that they will be pretty good at setting up systems to do this. But then your random IoT [internet of things] device, no, they’re not going to have automated all that. It takes work to automate that. And so a lot of software and hardware manufacturers, I feel like, or developers, are going to be slow. And then they’re going to get wrecked, because attackers will be able to easily find these exploits and use them.
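The scan-and-triage loop Jeffrey describes from his security-engineering job (scan every host against a database of known vulnerabilities, bucket the findings by severity, and route each engineering team its most urgent patches) can be sketched as follows. The `Finding` fields and the example IDs in the test are hypothetical rather than any commercial scanner's schema; the severity buckets follow the CVSS v3 qualitative rating bands (critical 9.0 and up, high 7.0 to 8.9, medium 4.0 to 6.9, low 0.1 to 3.9).

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Finding:
    """One scanner finding; field names are hypothetical, not a real vendor schema."""
    host: str
    vuln_id: str
    cvss: float            # CVSS base score, 0.0 to 10.0
    patch_available: bool
    owner_team: str


def severity_bucket(cvss: float) -> str:
    """Map a CVSS v3 base score to its qualitative severity rating."""
    if cvss >= 9.0:
        return "critical"
    if cvss >= 7.0:
        return "high"
    if cvss >= 4.0:
        return "medium"
    if cvss > 0.0:
        return "low"
    return "none"


def triage(findings):
    """Group findings by owning team; within a team, highest score first,
    and among equal scores, findings with a patch available first."""
    queues = defaultdict(list)
    for f in findings:
        queues[f.owner_team].append(f)
    for team_findings in queues.values():
        team_findings.sort(key=lambda f: (-f.cvss, not f.patch_available))
    return dict(queues)
```

Preferring findings with an available patch as a tie-breaker reflects Jeffrey's point that for known vulnerabilities “someone just had to go patch them”; the hard part he describes, applying patches without breaking compatibility, is exactly what this sketch leaves out.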

Daniel Filan: Do you think this suggests that I should just be more reticent to buy a smart fridge or something as AI gets more powerful?

Jeffrey Ladish: Yeah. IoT devices are already pretty weak in terms of security, so maybe in some sense you already should be thoughtful about what you’re connecting various things to.

Fortunately, most modern devices, like your phone, are probably not going to be compromised by a device on your local network. It could happen, but it’s not very common, because usually that device would still have to do something complex in order to compromise your phone.

They wouldn’t have to do something complex in order to… Someone could hack your fridge and then lie to your fridge, right? That might be annoying to you. Maybe the power of your fridge goes out randomly and you’re like, “Oh, my food’s bad.” That sucks, but it’s not the same as getting your email hacked, right?

Daniel Filan: Sure. Fair enough. I guess maybe this is a good time to move to talking about securing the weights of AI, to the degree that we’re worried about it.

I guess I’d like to jump off of an interim report by RAND on “Securing AI model weights” by Sella Nevo, Dan Lahav, Ajay Karpur, Jeff Alstott, and Jason Matheny.

I’m talking to you about it because my recollection is you gave a talk roughly about this topic at EA Global.

Jeffrey Ladish: Yeah.

Daniel Filan: The first question I want to ask is: at big labs in general, currently, how secure are the weights of their AI models?

Jeffrey Ladish: There are five security levels in that RAND report, from Security Level 1 through Security Level 5. These levels correspond to the ability to defend against different classes of threat actors: SL1 is the bare minimum, SL2 means you can defend against some opportunistic threats, and SL3 means you can defend against most non-state actors, including somewhat sophisticated ones like fairly well-organized ransomware gangs, people trying to steal things for blackmail, or criminal groups.

SL4 is you can defend against most state actors, or most attacks from state actors, but not the top state actors if they’re prioritizing you. Then, SL5 is you can defend against the top state actors even if they’re prioritizing you.

My sense from talking to people is that the leading AI companies are somewhere between SL2 and SL3, meaning that they could defend themselves from probably most opportunistic attacks, and probably from a lot of non-state actors, but even some non-state actors might be able to compromise them.

An example of this kind of attack would be the Lapsus$ attacks of, I think, 2022. This was a (maybe) Brazilian hacking group: not a state actor, just a criminal group hacking for fun and profit, which was able to compromise Nvidia and steal a whole lot of employee records, a bunch of information about how to manufacture graphics cards, and a bunch of other crucial IP that Nvidia certainly didn’t want to lose.

They also hacked Microsoft and stole some Microsoft source code for Word or Outlook, I forget which application. I think they also hacked Okta, the identity provider.

Daniel Filan: That seems concerning.

Jeffrey Ladish: Yeah, it is. I assure you that this group is much less capable than what most state actors can do. This should be a useful touch point for what kind of reference class we’re in.

I think the summary of the situation with AI lab security right now is that it’s pretty dire, because I think that companies are, or will be, targeted by top state actors, and I think that they’re very far away from being able to secure themselves against them.

That being said, I’m not saying that they aren’t doing a lot. I’ve actually seen them in the past few years hire a lot of people, build out their security teams, and really put in a great effort, especially Anthropic. I know more people from Anthropic. I used to work on the security team there, and the team has grown from two people when I joined to 30.

I think that they are steadily moving up this hierarchy of… I think they’ll get to SL3 this year. I think that’s amazing, and it’s difficult.

One thing I want to be very clear about is that it’s not that these companies aren’t trying or that they’re lazy. It’s just so difficult to get to SL5. I don’t know if there’s any organization I could clearly point to and say, “They’re probably at SL5.” Even with the defense companies and intelligence agencies, I’m like, “Some of them are probably SL4 and some of them maybe are SL5, I don’t know.”

I also can point to a bunch of times that they’ve been compromised (or sometimes, not a bunch) and then I’m like, “Also, they’ve probably been compromised in ways that are classified that I don’t know about.”

There’s also a problem of: how do you know how often a top state actor has compromised someone? You don’t. There are some instances where it’s leaked, or someone discloses that it has happened, but if you compare NSA leaks of targets they’ve attacked in the past with what was disclosed at the time by those targets or other sources, you see a lot missing. As in, there were a lot of people that they were successfully compromising who didn’t know about it.

We have notes in front of us. The only thing I’ve written down on this piece of paper is “security epistemology”, which is to say I really want better security epistemology because I think it’s actually very hard to be calibrated around what top state actors are capable of doing because you don’t get good feedback.

I noticed this when working on a security team at a lab, where I was like, “I’m doing all these things, putting all these controls in place. Is this working? How do we know?”

It’s very different from being an engineer working on big infrastructure, where you can look at your uptime, right? At some level, your uptime tells you something about how good your reliability engineering is. If you have 99.999% uptime, you’re doing a good job. You just have the feedback.

Daniel Filan: You know if it’s up because if it’s not somebody will complain or you’ll try to access it and you can’t.

Jeffrey Ladish: Totally. Whereas: did you get hacked or not? If you have a really good attacker, you may not know. Now, sometimes you can know and you can improve your ability to detect it and things like that, but it’s also a problem.

There’s an analogy with the bioterrorism thing, right? Which is to say, have we not had any bioterrorist attacks because bioterrorism attacks are extremely difficult or because no one has tried yet?

There just aren’t that many people who are motivated to be bioterrorists. Similarly, if you’re a company and you’re like, “Well, we don’t seem to have been hacked by state actors,” is that because (1) you can’t detect it, or (2) no one has tried yet, and as soon as they try you’ll get owned?

Daniel Filan: Yeah, I guess… I just got distracted by the bioterror thing. I guess one way to tell is just look at near misses or something. My recollection from… I don’t know. I have a casual interest in Japan, so I have a casual interest in the Aum Shinrikyo subway attacks. I really have the impression that it could have been a lot worse.

Jeffrey Ladish: Yeah, for sure.

Daniel Filan: A thing I remember reading is that they had some block of sarin in a toilet tank underneath a vent in Shinjuku Station [Correction: they were bags of chemicals that would combine to form hydrogen cyanide]. Someone happened to find it in time, but they needn’t necessarily have done so. During the sarin gas attacks themselves, a couple of people lost their nerve. I don’t know, I guess that’s one-

Jeffrey Ladish: They were very well resourced, right?

Daniel Filan: Yeah.

Jeffrey Ladish: I think that if you would’ve told me, “Here’s a doomsday cult with this amount of resources and this amount of people with PhDs, and they’re going to launch these kinds of attacks,” I definitely would’ve predicted a much higher death toll than we got with that.

Daniel Filan: Yeah. They did a very good job of recruiting from bright university students. It definitely helped them.

Jeffrey Ladish: You can compare that to the Rajneeshee attack, the group in Oregon that poisoned salad bars. What’s interesting there is that (1) it was much more targeted. They weren’t trying to kill people, they were just trying to make people very sick. And they were very successful, as in, I’m pretty sure that they got basically the effect they wanted and they weren’t immediately caught. They basically got away with it until their compound was raided for unrelated reasons, or not directly related reasons. Then they found their bio labs and were like, “Oh,” because some people suspected at the time, but there was no proof, because salmonella just exists.

Daniel Filan: Yeah. There’s a similar thing with Aum Shinrikyo: a few years earlier they had killed an anti-cult lawyer and done a very good job of hiding the body, dissolving it and spreading different parts of it in different places, and that only came out once people were arrested and willing to talk.

Jeffrey Ladish: Interesting.

Daniel Filan: I guess that one is less of a wide-scale thing. It’s hard to scale that attack. They really had to target this one guy.

Anyway, getting back to the topic of AI security. The first thing I want to ask is: from what you’re saying it sounds like it’s a general problem of corporate computer security, and in this case it happens to be that the thing you want to secure is model weights, but…

Jeffrey Ladish: Yes. It’s not something intrinsic about AI systems. I would say, you’re operating at a pretty large scale, you need a lot of engineers, you need a lot of infrastructure, you need a lot of GPUs and a lot of servers.

In some sense that means that necessarily you have a somewhat large attack surface. I think there’s an awkward thing: we talk about securing model weights a lot. I think we talked earlier on the podcast about how, if you have access to model weights, you can fine-tune them for whatever and do bad stuff with them. Also, they’re super expensive to train, right? So, it’s a very valuable asset. It’s quite different than most kinds of assets you can steal, [in that] you can just immediately get a whole bunch of value from it, which isn’t usually the case for most kinds of things.

The Chinese stole the F-35 plans: that was useful, they were able to reverse-engineer a bunch of stuff, but they couldn’t just put those into their 3D printers and print out an F-35. There’s so much tacit knowledge involved in the manufacturing. That’s just not as much the case with models, right? You can just do inference on them. It’s not that hard.

Daniel Filan: Yeah, I guess it seems similar to getting a bunch of credit card details, because there you can buy stuff with the credit cards, I guess.

Jeffrey Ladish: Yes. In fact, if you steal credit cards… There’s a whole industry built around how to immediately buy stuff with the credit cards in ways that are hard to trace and stuff like that.

Daniel Filan: Yeah, but it’s limited time, unlike model weights.

Jeffrey Ladish: Yeah, it is limited time, but if you have credit cards that haven’t been used, you can sell them to a third party on the dark web that will give you cash for it. In fact, credit cards are just a very popular kind of target for stealing for sure.

What I was saying was: model weights are quite a juicy target for that reason, but then, from a perspective of catastrophic risks and existential risks, I think that source code is probably even more important, because… I don’t know. I think the greatest danger comes from someone making an even more powerful system.

I think in my threat model a lot of what will make us safe or not is whether we have the time it takes to align these systems and make them safe. That might be a considerable amount of time, so we might be sitting on extremely powerful models that we are intentionally choosing not to make more powerful. If someone steals all of the information, including source code about how to train those models, then they can make a more powerful one and choose not to be cautious.

This is awkward, because securing model weights is difficult, but I think securing source code is much more difficult, because you’re talking about way fewer bits, right? Source code is just not that much information, whereas weights are a huge amount of information.

Ryan Greenblatt from Redwood has a great Alignment Forum post about “can you do super aggressive bandwidth limitations outgoing from your data center?” I’m like: yeah, you can, or in principle you should be able to.

I don’t think that makes you completely safe. You want to do defense in depth. There are many things you want to do, but that’s the kind of sensible thing it makes sense to do, right? To be like, “Well, we have a physical control on this cable that says no more than this amount of data can ever pass through it.” And the closer you can get to the bare metal, the more it simplifies the assumptions your system’s security properties rest on.

Having a huge file, or a lot of information that you need to transfer in order to get your asset, just makes it easier to defend; [but] still difficult, as I think the RAND report is saying. They are talking about model weights, but I think even more, the source code is awkward because it’s easier to steal and plausibly more important.
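To make the bandwidth point concrete, here is a back-of-the-envelope sketch in Python. The sizes and the cap are hypothetical illustrative numbers, not figures from the RAND report or from Ryan Greenblatt’s post: it assumes a 2 TB weights file, 100 MB of source code, and a 1 Mbit/s cap on outgoing traffic, and computes the minimum time needed to push each asset through that cap.

```python
def exfiltration_time_seconds(size_bytes: float, cap_bits_per_second: float) -> float:
    """Minimum time to move size_bytes through a link capped at cap_bits_per_second."""
    if cap_bits_per_second <= 0:
        raise ValueError("cap must be positive")
    return size_bytes * 8 / cap_bits_per_second  # 8 bits per byte


CAP_BITS_PER_SECOND = 1e6  # hypothetical egress cap: 1 megabit per second
ASSETS = {
    "weights": 2e12,      # hypothetical 2 TB of model weights
    "source code": 1e8,   # hypothetical 100 MB of source code
}

for name, size in ASSETS.items():
    t = exfiltration_time_seconds(size, CAP_BITS_PER_SECOND)
    print(f"{name}: {t / 86400:,.1f} days ({t / 60:,.0f} minutes)")
```

Under these assumptions the weights take roughly half a year to leave through the capped link, while the source code gets out in about 13 minutes, which is why a bandwidth limit helps much more for weights than for code.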

Daniel Filan: Yeah. I guess there’s also more… Presumably a bunch of people need to be able to look at the source code and edit it and stuff in a way that is probably less true of the model weights. Your interaction is a bit more abstracted.

Jeffrey Ladish: Totally, yeah. There are far fewer people who need access to the model weights, which is another reason why source code is harder to secure.

Daniel Filan: Also, from the catastrophic risk perspective… I guess source code is easier to steal, but one reason you might be a bit more worried about model weights is that if you just have source code, you also need a bunch of computation to end up with a thing, whereas with model weights, you have that baked in. If I think about the kinds of things you would need to do to secure source code and model weights… A thing people are also talking about is “AI control”: basically, if an AI were misaligned and smart, but not like a billion times smarter than humans, could it escape from your lab and start running itself? Do you have a sense of how similar the things you would do to stop an AI from escaping are to the things you would do to stop other people from stealing AI IP?

Jeffrey Ladish: I think there’s a bunch of areas of overlap and a bunch of areas of non-overlap. Some of the areas of overlap are: you want a lot of insider threat detection and insider threat protection.

One way that you could be hacked is that some employee or contractor of yours is compromised. Maybe someone is threatening their family, maybe they signed up to work for you under false pretenses, or maybe they’re bribed, and you want to make sure that no individual person who has defected can steal your model weights.

There are various ways that an AI system could try to hack its way out. One of them is trying to compromise someone, so forcing that system to compromise multiple people, which presumably is harder than compromising one person, is one kind of control.
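Forcing an attacker, human or AI, to compromise more than one person resembles a two-person rule. A minimal sketch, assuming a hypothetical `WeightAccessRequest` class (not any lab’s actual system): a request for access to weights is only authorized once a required number of distinct people, other than the requester, have approved it.

```python
from dataclasses import dataclass, field


@dataclass
class WeightAccessRequest:
    """Hypothetical request for access to model weights under a multi-party rule."""

    requester: str
    required_approvals: int = 2
    approvers: set[str] = field(default_factory=set)

    def approve(self, person: str) -> None:
        # Self-approval would let a single compromised person act alone.
        if person == self.requester:
            raise PermissionError("requester cannot approve their own request")
        # Stored in a set, so repeat approvals by the same person count once.
        self.approvers.add(person)

    def is_authorized(self) -> bool:
        # Authorized only when enough distinct non-requesters have signed off.
        return len(self.approvers) >= self.required_approvals


request = WeightAccessRequest(requester="alice")
request.approve("bob")
request.approve("bob")  # a duplicate does not add a second approval
print(request.is_authorized())  # False
request.approve("carol")
print(request.is_authorized())  # True
```

The design choice here is that one compromised insider, whether the requester or a single approver, is never enough on their own; an attacker has to compromise at least `required_approvals` other people.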

Also, just in terms of “what does that system have access to?”, I think a lot of the AI control schemes will try to separate out permissions a lot, so that no individual system, and no group of systems, has enough knowledge or permissions to be able to self-exfiltrate.
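The permission-separation idea can be checked mechanically. The sketch below assumes a hypothetical set of grants and a hypothetical pair of permissions that together would allow self-exfiltration (reading the weights and sending data outside the network). It lists every minimal group of up to `max_size` principals whose combined permissions cover that pair, which is the kind of configuration separating out permissions aims to rule out.

```python
from itertools import combinations

# Hypothetical: holding both permissions together would be enough to exfiltrate weights.
EXFIL_PERMISSIONS = frozenset({"read_weights", "external_network_egress"})


def risky_coalitions(
    grants: dict[str, set[str]],
    needed: frozenset[str] = EXFIL_PERMISSIONS,
    max_size: int = 2,
) -> list[tuple[str, ...]]:
    """Return minimal groups of principals whose combined permissions cover `needed`."""
    risky: list[tuple[str, ...]] = []
    names = sorted(grants)
    for size in range(1, max_size + 1):
        for group in combinations(names, size):
            combined = set().union(*(grants[name] for name in group))
            if not needed <= combined:
                continue
            # Skip groups that merely add members to an already-risky smaller group.
            if any(set(smaller) < set(group) for smaller in risky):
                continue
            risky.append(group)
    return risky


grants = {  # hypothetical principals and their permissions
    "ops_admin": {"read_weights", "external_network_egress"},
    "training_agent": {"read_weights"},
    "web_agent": {"external_network_egress"},
}
print(risky_coalitions(grants))  # [('ops_admin',), ('training_agent', 'web_agent')]
```

A one-element group flags a single principal who could exfiltrate alone; a two-element group flags a pair that would have to collude. Raising `max_size` checks larger coalitions at the cost of more combinations.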